Design of Sensor Signal Processing with ForSyDe

version 0.3.1

This document serves as a report and as a step-by-step tutorial for modeling, simulating, testing and synthesizing complex heterogeneous systems in ForSyDe, with special focus on parallel and concurrent systems. The application under test is a radar signal processing chain for an active electronically scanned array (AESA) antenna provided by Saab AB. Throughout this report the application will be modeled using several different frameworks, gradually introducing new modeling concepts and pointing out similarities and differences between them.

There is also a PDF version of this report. The source code used in this report is found at this GitHub repository.

1 Introduction

In order to develop more cost-efficient implementation methods for complex systems, we need to understand and exploit the inherent properties derived from the specification of the target applications and, based on these properties, be able to explore the design space offered by alternative platforms. Such is the case of the application studied in this report: the active electronically scanned array (AESA) radar is a versatile system that is able to determine both position and direction of incoming objects, however critical parts of its signal processing has significant demands on processing and memory bandwidth, making it well out-of reach from the general public usage. We believe that a proper understanding of the temporal and spatial properties of the signal processing chain can lead to a better exploration of alternative solutions, ideally making it an affordable appliance in the context of current technology limitations. Nevertheless, expressing behaviors and (extra-functional) properties of systems in a useful way is far from a trivial task and it involves respecting some key principles:

- the language(s) chosen to represent the models need(s) to be formally defined and unambiguous to be able to provide a solid foundation for analysis and subsequent synthesis towards implementation.

- the modeling paradigm should offer the right abstraction level for capturing the needed properties (Lee 2015). An improper model might either abstract away essential properties or over-specify them in a way that makes analysis impossible. In other words it is the engineer’s merit to find the right model for the right “thing being modeled”.

- the models, at least during initial development stages, need to be deterministic with regard to defining what correct behavior is (Lee 2018).

- at a minimum, the models need to be executable in order to verify their conformance with the system specification. Ideally they should express operational semantics which are traceable across abstraction levels, ultimately being able to be synthesized on the desired platform (Sifakis 2015).

ForSyDe is a design methodology which envisions “correct-by-construction system design” through formal or rigorous methods. Its associated modeling frameworks offer means to tackle the challenges enumerated above by providing well-defined composable building blocks which capture extra-functional properties in unison with functional ones. ForSyDe-Shallow is a domain specific language (DSL) shallow-embedded in the functional programming language Haskell, meaning that it can be used only for modeling and simulation purposes. It introduced the concept of process constructors (Sander and Jantsch 2004) as building blocks that capture the semantics of computation, concurrency and synchronization as dictated by a certain model of computation (MoC). ForSyDe-Atom is also a shallow-embedded (set of) DSL which extends the modeling concepts of ForSyDe-Shallow to systematically capture the interacting extra-functional aspects of a system in a disciplined way as interacting layers of minimalistic languages of primitive operations called atoms (Ungureanu and Sander 2017). ForSyDe-Deep is a deep-embedded DSL implementing a synthesizable subset of ForSyDe, meaning that it can parse the structure of process networks written in this language and operate on their abstract syntax: either simulate them or further feed them to design flows. Currently ForSyDe-Deep is able to generate GraphML structure files and synthesizable VHDL code.

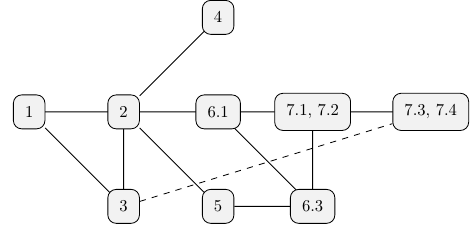

This documents presents alternatives ways to modelling the AESA radar signal processing chain and, using these models, gradually introducing one concept at a time and pointing towards reference documentation. The final purpose is to refine, synthesize, and replace parts of the behavioral model down to VHDL implementation on FPGA hardware platforms, and co-simulate these design artifacts along with the initial high-level model. The report itself is written using literate programming, which means that all code snippets contained are actual compiled code alternating with documentation text. Following the report might be difficult without some initial clarification. The remaining parts of section 1 will present a guide to using this document, as well as an introduction to the AESA application. In section 2 a high-level, functionally complete ForSyDe-Atom model of the application is thoroughly presented with respect to the specification, and tested against a set of known input data. In section 3 an equivalent model written in ForSyDe-Shallow is briefly presented and tested, to show the main similarities and differences between the two modeling APIs. In section 4 we model the radar environment as describing the object reflections, represented by continuous signals in time subjected to white noize. In section 5 is introduced the concept of property checking for the purpose of validation of ForSyDe designs. We formulate a set of properties in the QuicCheck DSL for each component of the AESA model which are validated against a number of randomly-generated tests. In section 6.1 we focus on refining the behavior of the initial (high-level) specification model to lower level ones, more suitable for (backend) implementation synthesis, followed by section 6.3 where we formulate new properties for validating some of these refinements. All refinements in section 6 happen in the domain(s) of the ForSyDe-Atom DSL. In section 7 we switch the DSL to ForSyDe-Deep, which benefits from automatic synthesis towards VHDL: in each subsection the refinements are stated, the refined components are modeled, properties are formulated to validate them, and in sections 7.3, 7.4 VHDL code is generated and validated.

Figure 1 depicts a reading order suggestion, based on information dependencies. The dashed line between section 3 and sections 7.3, 7.4 suggests that understanding the latter is not directly dependent on the former, but since ForSyDe-Deep syntax is derived from ForSyDe-Shallow, it is recommended to get acquainted with the ForSyDe-Shallow syntax and its equivalence with the ForSyDe-Atom syntax.

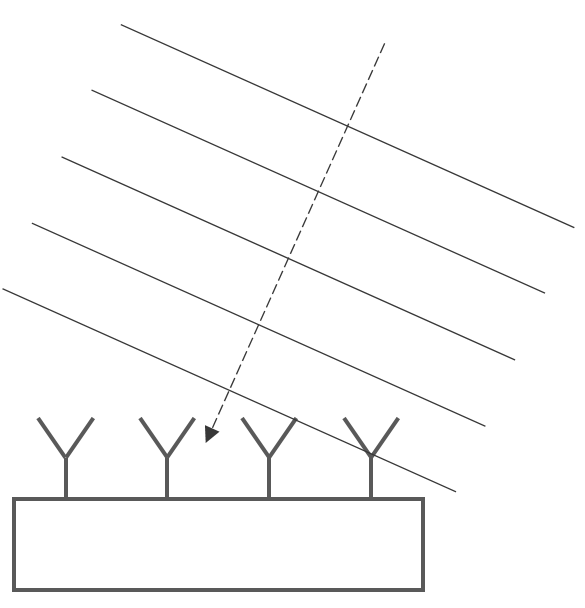

1.1 Application Specification

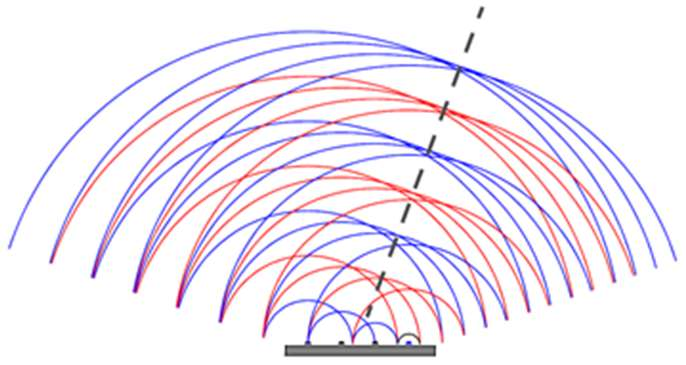

An AESA, see picture below, may consist of thousands of antenna elements. The relative phases of the pulses of the antenna’s different antenna elements can be set to create a constructive interference in the chosen main lobe bearing. In this way the pointing direction can be set without any moving parts. When receiving, the direction can be steered by following the same principle, as seen the Digital Beam Former below. One of the main advantages of the array antennas is the capacity to extract not only temporal but also spatial information, i.e. the direction of incoming signals.

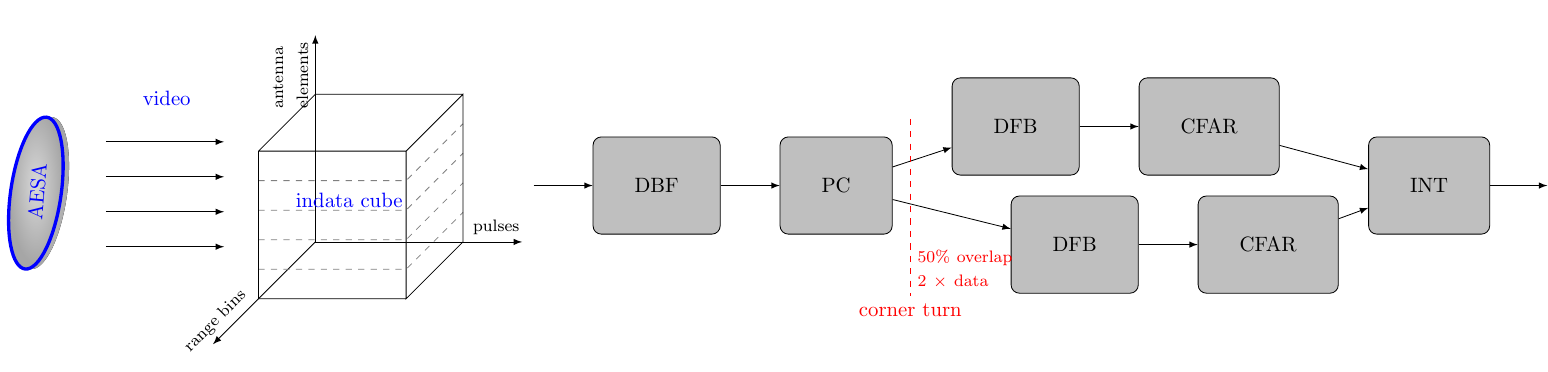

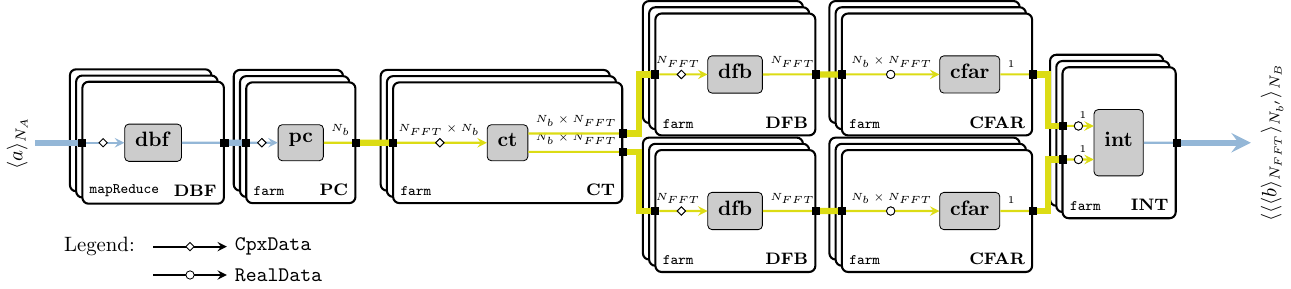

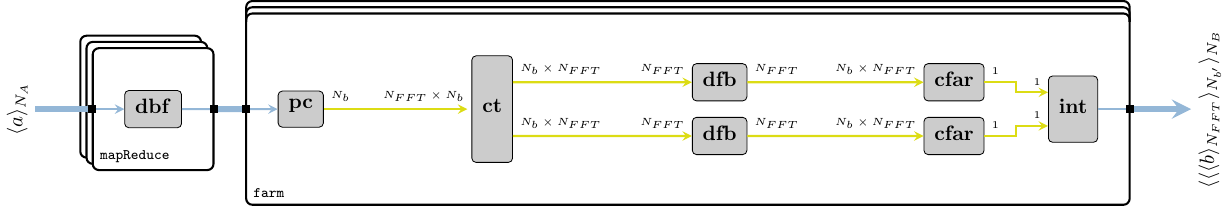

Figure 2 shows a simplified radar signal processing chain that is used to illustrate the calculations of interest. The input data from the antenna is processed in a number of steps.

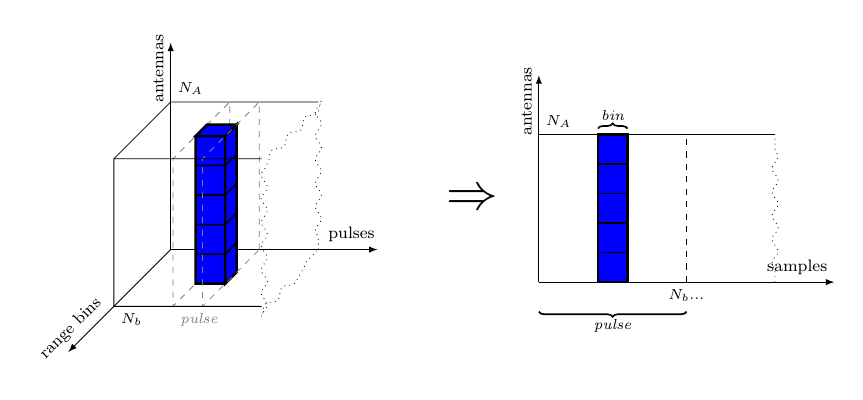

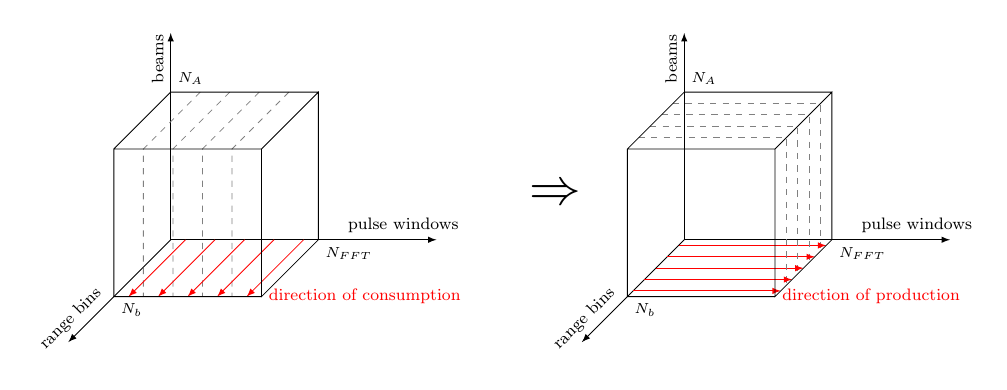

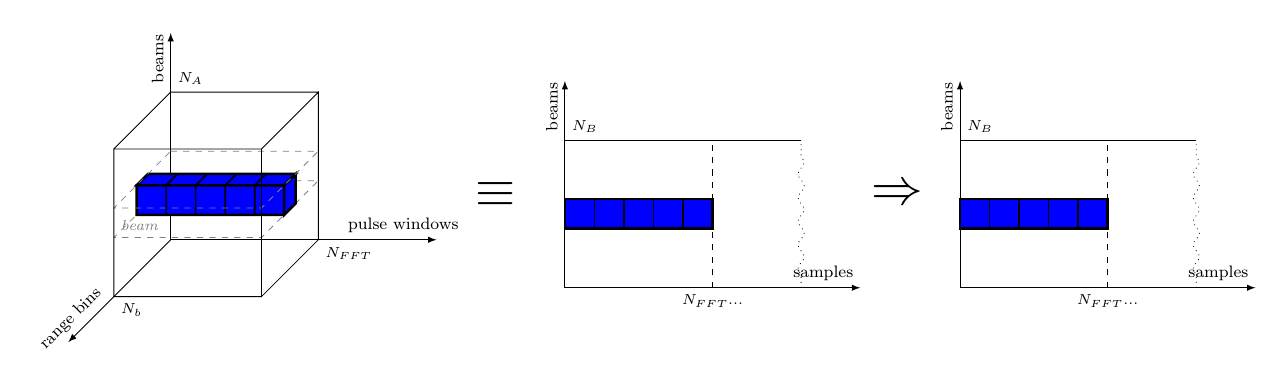

In this report we assume one stream per antenna element. The indata is organized into a sequence of “cubes”, each corresponding to a certain integration interval. Each sample in the cube represents a particular antenna element, pulse and range bin. The data of the cube arrives pulse by pulse and each pulse arrives range bin by range bin. This is for all elements in parallel. Between the Pulse Compression (PC) and Doppler Filter Bank (DFB) steps there is a corner turn of data, i.e. data from all pulses must be collected before the DFB can execute.

The different steps of the chain, the antenna and the input data format are briefly described in the following list. For a more detailed description of the processing chain, please refer to section 2.

Digital Beam Forming (DBF): The DBF step creates a number of simultaneous receiver beams, or “listening directions”, from the input data. This is done by doing weighted combinations of the data from the different antenna elements, so that constructive interference is created in the desired bearings of the beams. The element samples in the input data set are then transformed into beams.

Pulse Compression (PC): The goal of the pulse compression is to collect all received energy from one target into a single range bin. The received echo of the modulated pulse is passed through a matched filter. Here, the matched filtering is done digitally.

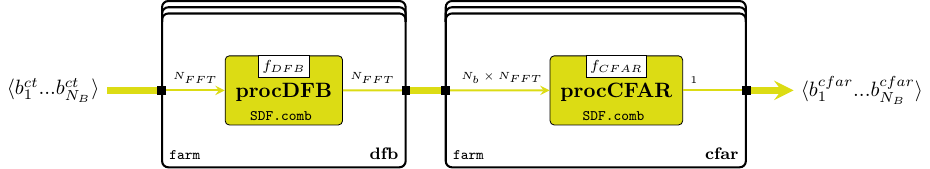

Doppler Filter Bank incl. envelope detection (DFB): The DFB gives an estimation of the target’s speed relative to the radar. It also gives an improved signal-to-noise ratio due to a coherent integration of indata. The pulse bins in the data set are transformed into Doppler channels. The envelope detector calculates the absolute values of the digital samples. The data is real after this step.

Constant False Alarm Ratio (CFAR): The CFAR processing is intended to keep the number of false targets at an acceptable level while maintaining the best possible sensitivity. It normalizes the video in order to maintain a constant false alarm rate when the video is compared to a detection threshold. With this normalization the sensitivity will be adapted to the clutter situation in the area (around a cell under test) of interest.

Integrator (INT) The integrator is an 8-tap unsigned integrator that integrates channel oriented video. Integration shall be done over a number of FFT batches of envelope detected video. Each Doppler channel, range bin and antenna element shall be integrated.

The following table briefly presents the dimensions of the primitive functions associated with each processing stage, where data sets are measured in number of elements processed organized in channels range bins pulses; data precision is measured in bit width; and approximative performance is given in MOPS. These dimensions represent the scaled-down version of a realistic AESA radar signal processing system, with 16 input antenna channels forming into 8 beams.

| Block | In Data Set | Out Data Set | Type In | Out | Precision In | Out | Approx. perform. |

|---|---|---|---|---|---|---|---|

| DBF | 16 | 20 | 2688 | ||||

| PC | 20 | 20 | 4608 | ||||

| DFB | 20 | 20 | 7680 | ||||

| CFAR | 20 | 16 | 360 | ||||

| INT | 16 | 16 | 24576 | ||||

| AESA | – | – | 39912 |

1.2 Using This Document

PREREQUISITES: the document assumes that the reader is familiar with the functional programming language Haskell, its syntax, and the usage of a Haskell interpreter (e.g. ghci). Otherwise, we recommend consulting at least the introductory chapters of one of the following books by Lipovača (2011) and Hutton (2016) or other recent books in Haskell. The reader also needs to be familiar with some basic ForSyDe modeling concepts, such as process constructor, process or signal. We recommend going through at least the online getting started tutorial on ForSyDe-Shallow or the one on ForSyDe-Atom, and if possible, consulting the (slightly outdated) book chapter on ForSyDe (Sander, Jantsch, and Attarzadeh-Niaki 2017).

This document has been created using literate programming. This means that all code shown in the listings is compilable and executable. There are two types of code listing found in this document. This style

shows source code as it is found in the implementation files, where the line numbers correspond to the position in the source file. This style

Prelude> 1 + 1

2

suggests interactive commands given by the user in a terminal or an interpreter session. The listing above shows a typical ghci session, where the string after the prompter symbol > suggests the user input (e.g. 1 + 1). Whenever relevant, the expected output is printed one row below (e.g. 2).

The way this document is meant to be parsed efficiently is to load the source files themselves in an interpreter and test the exported functions gradually, while reading the document at the same time. Due to multiple (sometimes conflicting) dependencies on external packages, for convenience the source files are shipped as multiple Stack packages each creating an own sandbox on the user’s local machine with all dependencies and requirements taken care of. Please refer to the project’s README file for instructions on how to install and compile or run the Haskell files.

At the beginning of each chapter there is meta-data guiding the reader what tools and packages are used, like:

| Package | aesa-atom-0.1.0 | path: ./aesa-atom/README.md |

This table tells that the package where the current chapter’s code resides is aesa-atom, version 0.1.0. This table might contain information on the main dependency packages which contain important functions, and which should be consulted while reading the code in the current chapter. If the main package generates an executable binary or a test suite, these are also pointed out. The third column provides additional information such as a pointer to documentation (relative path to the project root, or web URL), or usage suggestions.

It is recommended to read the main package’s README file which contains instructions on how to install, compile and test the software, before proceeding with following a chapter. Each section of a chapter is written within a library module, pointed out in the beginning of the respective section by the line:

The most convenient way to test out all functions used in module ForSyDe.X.Y is by loading its source file in the sandboxed interpreter, i.e. by running the following command from the project root:

stack ghci src/ForSyDe/X/Y.lhs

An equally convenient way is to create an own .hs file somewhere under the project root, which imports and uses module ForSyDe.X.Y, e.g.

This file can be loaded and/or compiled from within the sandbox, e.g. with stack ghci MyTest.hs.

2 High-Level Model of the AESA Signal Processing Chain in ForSyDe-Atom

This section guides the reader throughout translating “word-by-word” the provided specifications of the AESA signal processing chain into a concrete, functionally complete, high-level executable model in ForSyDe-Atom. This first attempt focuses mainly on the top-level functional behavior of the system, exerting the successive transformations upon the input video cubes, as suggested by Figure 2. We postpone the description/derivation of more appropriate time behaviors for later sections. Enough AESA system details are given in order to understand the model. At the end of this section we simulate this model against a realistic input set of complex antenna data, and test if it is sane (i.e. provides the expected results).

| Package | aesa-atom-0.1.0 | path: ./aesa-atom/README.md |

| Deps | forsyde-atom-0.3.1 | url: https://forsyde.github.io/forsyde-atom/api/ |

| Bin | aesa-cube | usage: aesa-cube --help |

Historically, ForSyDe-Atom has been a spin-off of ForSyDe-Shallow which has explored new modeling concepts, and had a fundamentally different approach to how models are described and instantiated. ForSyDe-Atom introduced the concept of language layer, extending the idea of process constructor, and within this setting it explored the algebra of algorithmic skeletons, employed heavily within this report. As of today, both ForSyDe-Shallow and ForSyDe-Atom have similar APIs and are capable of modeling largely the same behaviors. The main syntax differences between the two are covered in section 3, where the same high-level model is written in ForSyDe-Shallow.

2.1 Quick Introduction to ForSyDe-Atom

Before proceeding with the modeling of the AESA processing chain, let us consolidate the main ForSyDe concepts which will be used throughout this report: layer, process constructor and skeleton. If you are not familiar with ForSyDe nomenclature and general modeling principles, we recommend consulting the documentation pointed out in section 1 first.

As seen in section 1.Application Specification, most of the AESA application consists of typical DSP algorithms such as matrix multiplications, FFT, moving averages, etc. which lend themselves to streaming parallel implementations. Hence we need to unambiguously capture the two distinct aspects of the AESA chain components:

streaming behavior is expressed in ForSyDe through processes which operate on signals encoding a temporal partitioning of data. Processes are instantiated exclusively using process constructors which can be regarded as templates inferring the semantics of computation, synchronization and concurrency as dictated by a certain model of computation (MoC) (Sander and Jantsch (2004),Edward A. Lee and Seshia (2016)).

parallelism is expressed through parallel patterns which operate on structured types (e.g. vectors) encoding a spatial partitioning of data. These patterns are instantiated as skeletons, which are templates inferring the semantics of distribution, parallel computation and interaction between elements, as defined by the algebra of catamorphisms (Fischer, Gorlatch, and Bischof 2003).

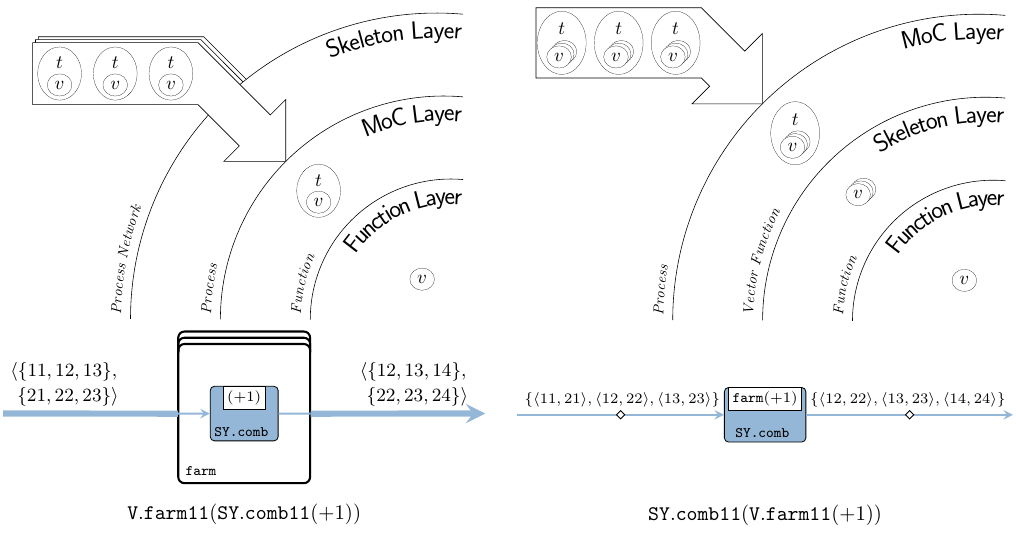

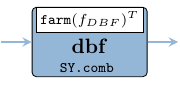

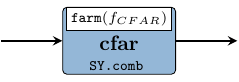

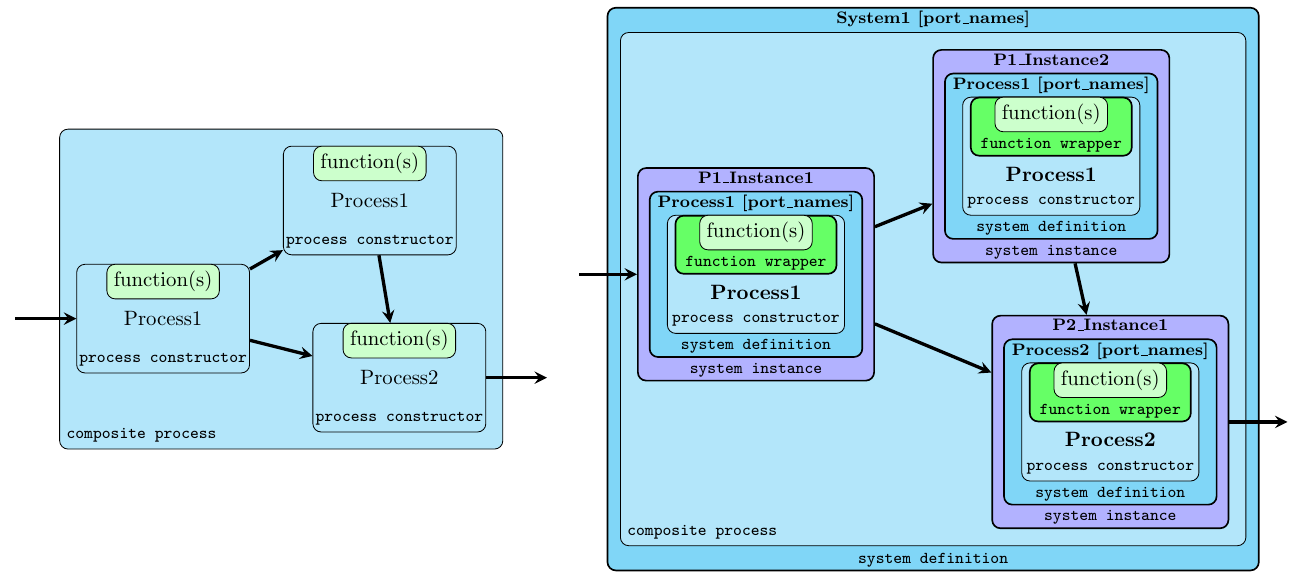

In order to capture these two1 interacting aspects in unison as a complete system, we describe them in terms of two distinct, orthogonal layers within the ForSyDe-Atom framework. A language layer is a (very small) domain specific language (DSL) heavily rooted in the functional programming paradigm, which is able to describe one aspect of a cyber-physical system (CPS). Layers interact with one another by virtue of the abstraction principle inherent to a functional programming language (Backus 1978): each layer defines at least one higher order function that is able to take a function (i.e. abstraction) from another layer as an argument and to lift it within its own domain. To picture the interaction between layers, consider Figure 2 where, although we use the same farm vector skeleton and comb synchronous (SY) process constructor, the two different compositions describe two different (albeit semantically equivalent) systems: the first one is instantiating a farm network of SY processes operating on a vector of signals in parallel; whereas the second one is instantiating a single SY process operating on o signal where each event is carrying a vector of values.

2.2 A High-Level Model

This section presents a high-level behavioral model of the AESA signal processing chain presented in section 1.Application Specification. This model follows intuitive and didactic way to tackle the challenge of translating the textual specifications into an executable ForSyDe specification and is not, in any circumstance, the only way to model the application. As of section 2.1 we represent each stage in the AESA chain as a process acting upon (complete) cubes of data, i.e. as processes of skeleton functions. At this phase in the system design we do not care how the cubes are being formed or how the data is being carried around, bur rather on what transformations are applied on the data cubes during each subsequent stage of the AESA pipeline. The purpose of the model is to provide a executable reference for the AESA system functionality, that can later be derived to more efficient descriptions.

The code for this section is written in the following module, see section 1.2 on how to use it:

As the AESA application uses complex numbers, we use Haskell’s Complex type.

The only timed behavior exerted by the model in this section is the causal, i.e. ordered, passing of cubes from one stage to another. In order to enable a simple, abstract, and thus analyzable “pipeline” behavior this passing can be described according to the perfect synchrony hypothesis, which assumes the processing of each event (cube) takes an infinitely small amount of time and it is ready before the next synchronization point. This in turn implies that all events in a system are synchronized, enabling the description of fully deterministic behaviors over infinite streams of events. These precise execution semantics are captured by the synchronous reactive (SY) model of computation (MoC) (Edward A. Lee and Seshia (2016),Benveniste et al. (2003)), hence we import the SY library from the MoC layer of ForSyDe-Atom, see (Ungureanu and Sander 2017), using an appropriate alias.

import ForSyDe.Atom.MoC.SY as SYFor describing parallel operations on data we use algorithmic skeletons (Fischer, Gorlatch, and Bischof (2003),Skillicorn (2005)), formulated on ForSyDe-Atom’s in-house Vector data type, which is a shallow, lazy-evaluated implementation of unbounded arrays, ideal for early design validation. Although dependent, bounded, and even boxed (i.e. memory-mapped) alternatives exist, such as FSVec or REPA Arrays, for the scope of this project the functional validation and (by-hand) requirement analysis on the properties of skeletons will suffice. We also import the Matrix and Cube utility libraries which contain type synonyms for nested Vectors along with their derived skeletons, as well a DSP which contain commonly used DSP blocks defined in terms of vector skeletons.

import ForSyDe.Atom.Skel.FastVector as V

import ForSyDe.Atom.Skel.FastVector.Matrix as M

import ForSyDe.Atom.Skel.FastVector.Cube as C

import ForSyDe.Atom.Skel.FastVector.DSPFinally, we import the local project module defining different coefficients for the AESA algorithms, presented in detail in section 2.2.Coefficients.

2.2.1 Type Aliases and Constants

The system parameters are integer constants defining the size of the application. For a simple test scenario provided by Saab AB, we have bundled these parameters in the following module, and we shall use their variable names throughout the whole report:

For ease of documentation we will be using type synonyms (aliases) for all types and structures throughout this design:

Antennadenotes a vector container for the antenna elements. Its length is equal to the number of antennas in the radar .After Digital Beamforming (DBF), the antenna elements are transformed into beams, thus we associate the

Beamalias for the vector container wrapping those beams.Rangeis a vector container for range bins. All antennas have the same number of range bins , rendering each a perfect matrix of samples for every pulse.Windowstands for a Doppler window of pulses.

type Antenna = Vector -- length: nA

type Beam = Vector -- length: nB

type Range = Vector -- length: nb

type Window = Vector -- length: nFFTFinally we provide two aliases for the basic Haskell data types used in the system, to stay consistent with the application specification.

2.2.2 Video Processing Pipeline Stages

In this section we follow each stage described in section 1.Application Specification, and model them as a processes operating on cubes (three-dimensional vectors) of antenna samples.

2.2.2.1 Digital Beamforming (DFB)

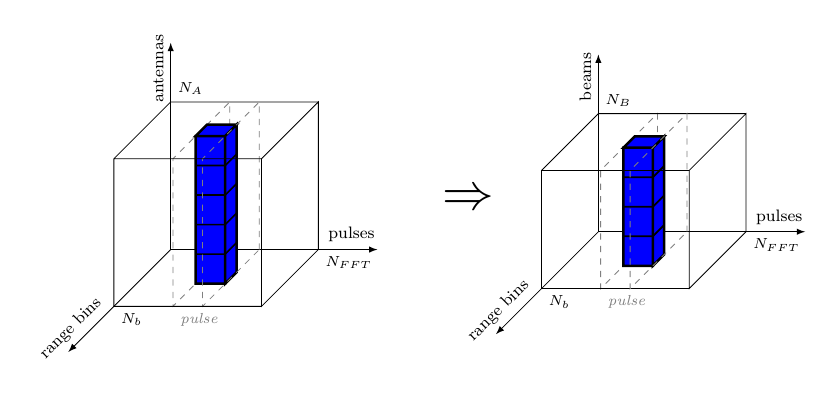

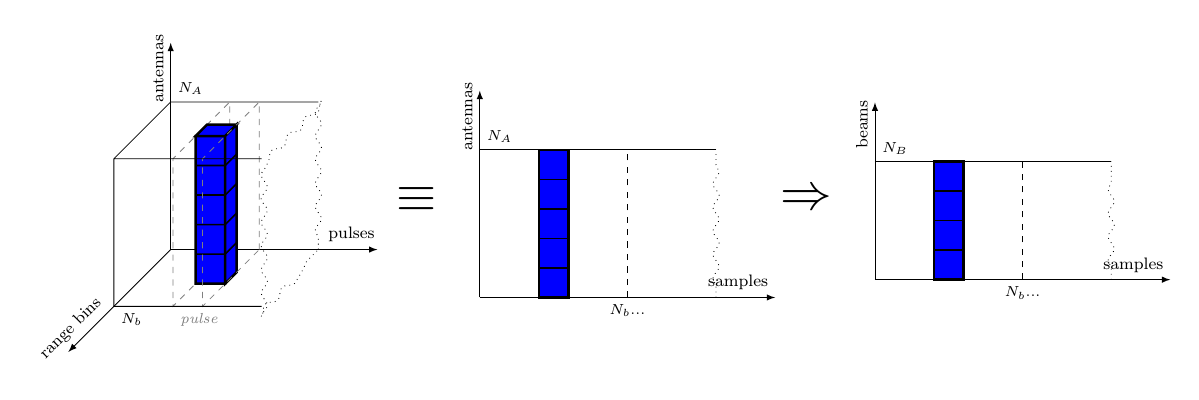

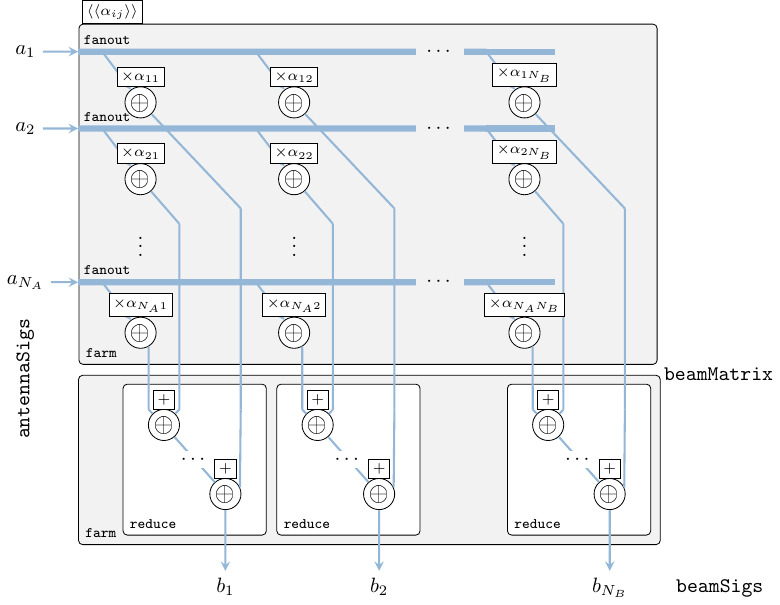

The DBF receives complex in data, from antenna elements and forms simultaneous receiver beams, or “listening directions”, by summing individually phase-shifted in data signals from all elements. Considering the indata video cube, the transformation applied by DBF, could be depicted as in Figure 4.

Considering the application specification in section 1.Application Specification on the input data, namely “for each antenna the data arrives pulse by pulse, and each pulse arrives range bin by range bin”, we can assume that the video is received as Antenna (Window (Range a)) cubes, meaning that the inner vectors are the range bins. However, Figure 4 shows that the beamforming function is applied in the antenna direction, so we need to transpose the cube in such a way that Antenna becomes the inner vector, i.e. Window (Range (Antenna a)). We thus describe the DBF stage as a combinational SY process comb acting upon signals of Cubes, namely mapping the beamforming function on each column of each pulse matrix (see Figure 4).

dbf :: Signal (Antenna (Window (Range CpxData)))

-> Signal (Window (Range (Beam CpxData)))

dbf = SY.comb11 (M.farm11 fDBF . C.transpose)The beamforming function is specified like in eq. 1, where denotes the samples from antenna elements, and respectively are samples for each beam. This is in fact a of matrix-vector multiplication, thus we implement eq. 1 at the highest level of abstraction simply as matrix/vector operations like in eq. 2.

fDBF :: Antenna CpxData -- ^ input antenna elements

-> Beam CpxData -- ^ output beams

fDBF antennaEl = beams

where

beams = V.reduce (V.farm21 (+)) beamMatrix

beamMatrix = M.farm21 (*) elMatrix beamConsts

elMatrix = V.farm11 V.fanout antennaEl

beamConsts = mkBeamConsts dElements waveLength nA nB :: Matrix CpxData| Function | Original module | Package |

|---|---|---|

farm11, reduce, length |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

farm11, farm21 |

ForSyDe.Atom.Skel.FastVector.Matrix |

forsyde-atom |

transpose |

ForSyDe.Atom.Skel.FastVector.Cube |

forsyde-atom |

comb11 |

ForSyDe.Atom.MoC.SY |

forsyde-atom |

mkBeamConsts |

AESA.Coefs |

aesa-atom |

dElements, waveLenth, nA, nB |

AESA.Params |

aesa-atom |

In fact the operation performed by the function in eq. 1, respectively in eq. 2 is nothing else but a dot operation between a vector and a matrix. Luckily, ForSyDe.Atom.Skel.FastVector.DSP exports a utility skeleton called dotvm which instantiates exactly this type of operation. Thus we can instantiate an equivalent simply as

fDBF' antennaEl = antennaEl `dotvm` beamConsts

where

beamConsts = mkBeamConsts dElements waveLength nA nB :: Matrix CpxDataThe expanded version fDBF was given mainly for didactic purpose, to get a feeling for how to manipulate different skeletons to obtain more complex operations or systems. From now on, in this section we will only use the library-provided operations, and we will study them in-depth only later in section 6.

2.2.2.2 Pulse Compression (PC)

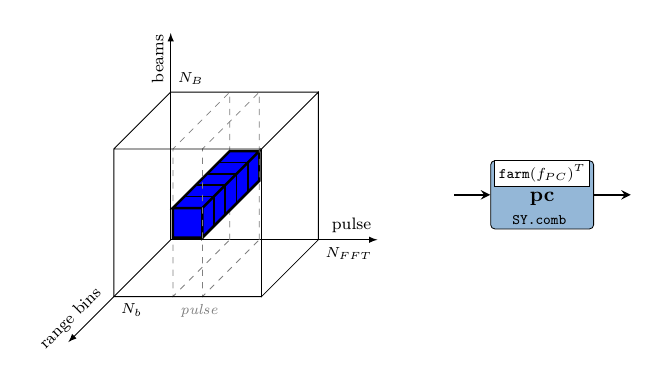

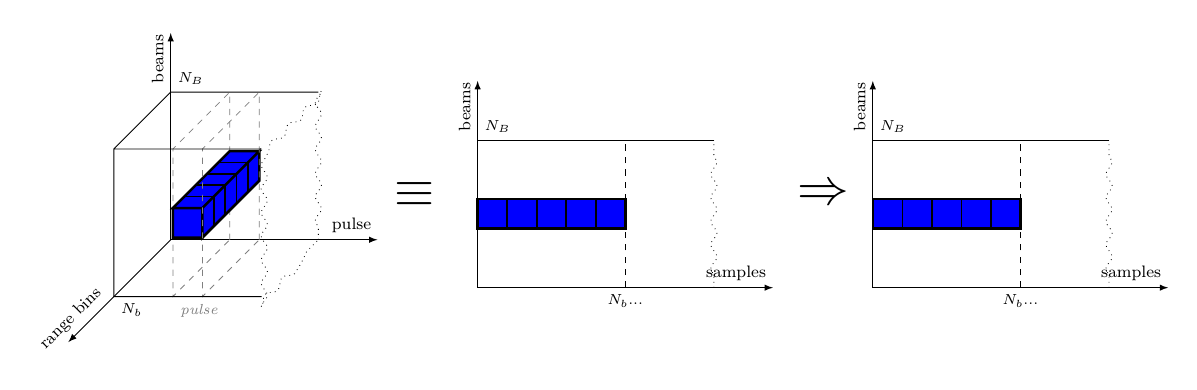

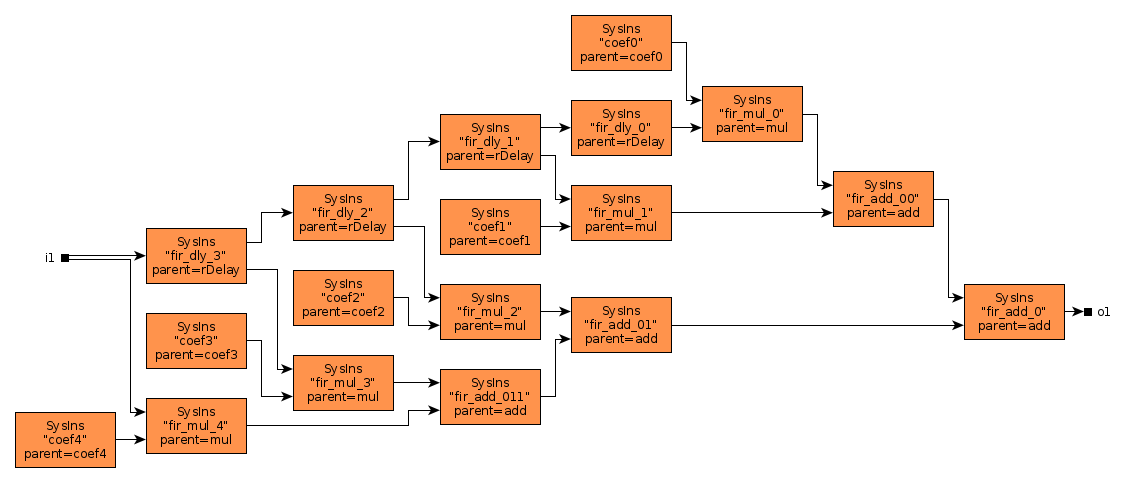

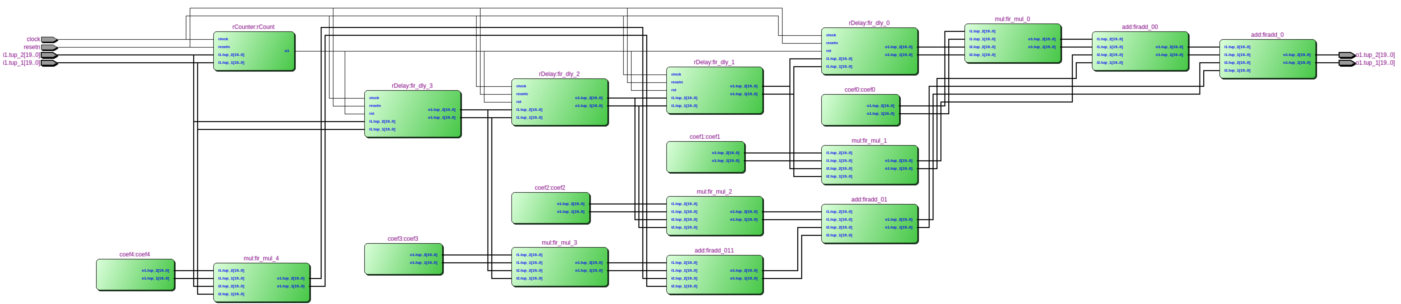

In this stage the received echo of the modulated pulse, i.e. the information contained by the range bins, is passed through a matched filter for decoding their modulation. This essentially applies a sliding window, or a moving average on the range bin samples. Considering the video cube, the PC transformation is applied in the direction shown in Figure 6, i.e. on vectors formed from the range of every pulse.

The PC process is mapping the on each row of the pulse matrices in a cube, however the previous stage has arranged the cube to be aligned beam-wise. This is why we need to re-arrange the data so that the innermost vectors are Ranges instead, and we do this by simply transpose-ing the inner Range Beam matrices into Beam Range ones.

pc :: Signal (Window (Range (Beam CpxData)))

-> Signal (Window (Beam (Range CpxData)))

pc = SY.comb11 (V.farm11 (V.farm11 fPC . M.transpose))

-- ^ == (M.farm11 fPC . "<a href='https://forsyde.github.io/forsyde-atom/api/ForSyDe-Atom-Skel-FastVector.html'>"V.farm11"" M.transpose)Here the function applies the fir skeleton on these vectors (which computes a moving average if considering vectors). The fir skeleton is a utility formulated in terms of primitive skeletons (i.e. farm and reduce) on numbers, i.e. lifting arithmetic functions. We will study this skeleton later in this report and for now we take it “for granted”, as conveniently provided by the DSP utility library. For this application we also use a relatively small average window (5 taps).

fPC :: Range CpxData -- ^ input range bin

-> Range CpxData -- ^ output pulse-compressed bin

fPC = fir (mkPcCoefs pcTap)| Function | Original module | Package |

|---|---|---|

comb11 |

ForSyDe.Atom.MoC.SY |

forsyde-atom |

farm11 |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

farm11, transpose |

ForSyDe.Atom.Skel.FastVector.Matrix |

forsyde-atom |

fir |

ForSyDe.Atom.Skel.FastVector.DSP |

forsyde-atom |

mkPcCoefs |

AESA.Coefs |

aesa-atom |

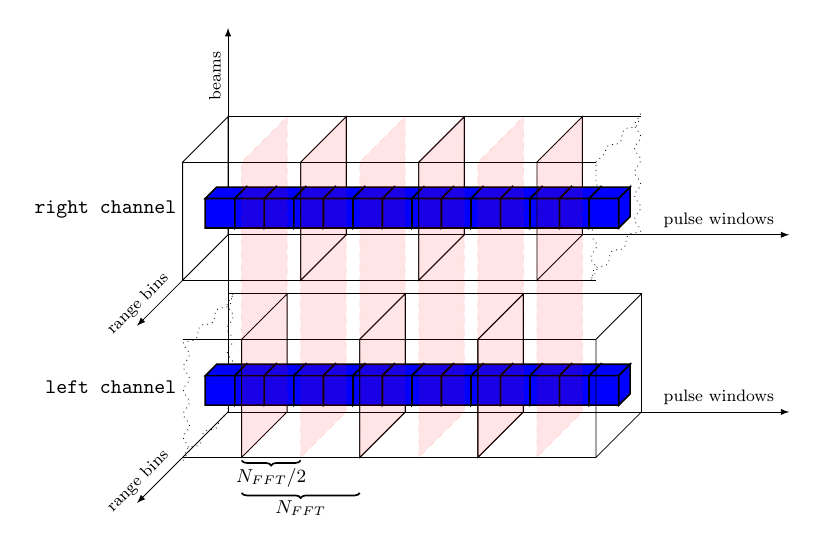

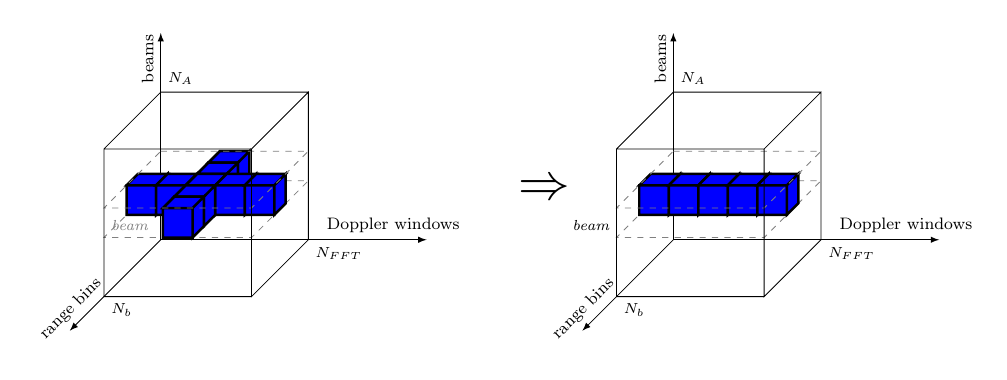

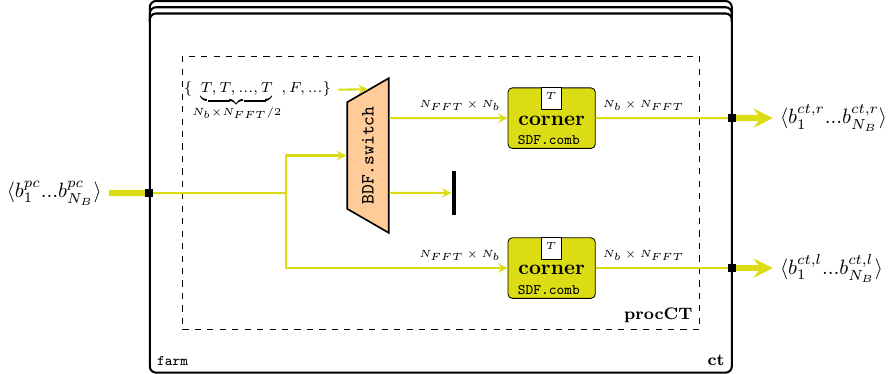

2.2.2.3 Corner Turn (CT) with 50% overlapping data

During the CT a rearrangement of data must be performed between functions that process data in “different” directions, e.g. range and pulse, in order to be able to calculate the Doppler channels further in the processing pipeline. The process of corner turning becomes highly relevant when detailed time behavior of the application is being derived or inferred, since it demands well-planned design decisions to make full use of the underlying architecture. At the level of abstraction on which we work right now though, it is merely a matrix transpose operation, and it can very well be postponed until the beginning of the next stage. However, a much more interesting operation is depicted in Figure 7: in order to maximize the efficiency of the AESA processing the datapath is split into two concurrent processing channels with 50% overlapped data.

Implementing such a behavior requires a bit of “ForSyDe thinking”. At a first glance, the problem seems easily solved considering only the cube structures: just “ignore” half of the first cube of the right channel, while the left channel replicates the input. However, there are some timing issues with this setup: from the left channel’s perspective, the right channel is in fact “peaking into the future”, which is an abnormal behavior. Without going too much into details, you need to understand that any type of signal “cleaning”, like dropping or filtering out events, can cause serious causality issues in a generic process network, and thus it is illegal in ForSyDe system modeling. On the other hand we could delay the left channel in a deterministic manner by assuming a well-defined initial state (e.g. all zeroes) while it waits for the right channel to consume and process its first half of data. This defines the history of a system where all components start from time zero and eliminates any source of “clairvoyant”/ambiguous behavior.

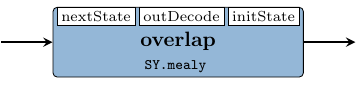

To keep things simple, we stay within the same time domain, keeping the perfect synchrony assumption, and instantiating the left channel building mechanism as a simple Mealy finite state machine. This machine splits an input cube into two halves, stores one half and merges the other half with the previously stored state to create the left channel stream of cubes.

overlap :: Signal (Window (Beam (Range CpxData)))

-> Signal (Window (Beam (Range CpxData)))

overlap = SY.mealy11 nextState outDecode initState

where

nextState _ cube = V.drop (nFFT `div` 2) cube

outDecode s cube = s <++> V.take (nFFT `div` 2) cube

initState = (V.fanoutn (nFFT `div` 2) . V.fanoutn nB . V.fanoutn nb) 0| Function | Original module | Package |

|---|---|---|

mealy11 |

ForSyDe.Atom.MoC.SY |

forsyde-atom |

drop, take, fanoutn, (<++>) |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

nFFT, nA, nB |

AESA.Params |

aesa-atom |

OBS! Perhaps considering all zeroes for the initial state might not be the best design decision, since that is in fact “junk data” which is propagated throughout the system and which alters the expected behavior. A much safer (and semantically correct) approach would be to model the initial state using absent events instead of arbitrary data. However this demands the introduction of a new layer and some quite advanced modeling concepts which are out of the scope of this report2. For the sake of simplicity we now consider that the initial state is half a cube of zeroes and that there are no absent events in the system. As earlier mentioned, it is illegal to assume any type of signal cleaning during system modeling, however this law does not apply to the observer (i.e. the testbench), who is free to take into consideration whichever parts of the signals it deems necessary. We will abuse this knowledge in order to show a realistic output behavior of the AESA signal processing system: as “observers”, we will ignore the effects of the initial state propagation from the output signal and instead plot only the useful data.

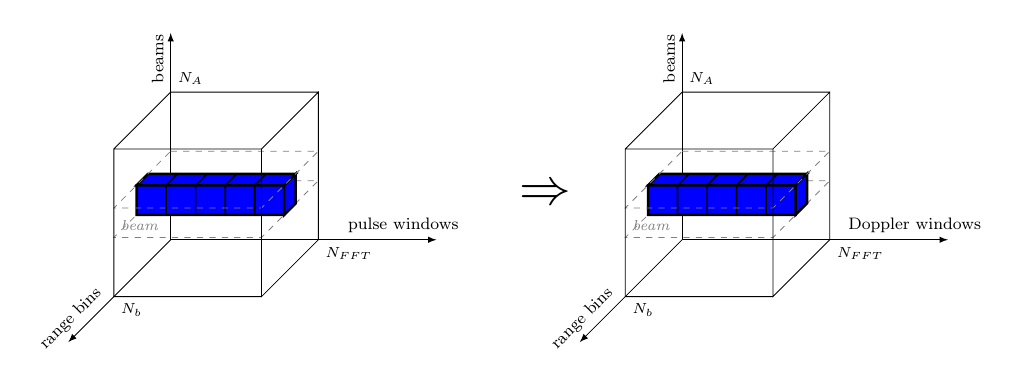

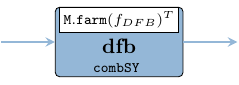

2.2.2.4 Doppler Filter Bank (DFB)

During the Doppler filter bank, every window of samples, associated with each range bin is transformed into a Doppler channel and the complex samples are converted to real numbers by calculating their envelope.

The dfb process applies the the following chain of functions on each window of complex samples, in three consecutive steps:

scale the window samples with a set of coefficients to decrease the Doppler side lobes from each FFT output and thereby to increase the clutter rejection.

apply an -point 2-radix decimation in frequency Fast Fourier Transform (FFT) algorithm.

compute the envelope of each complex sample when phase information is no longer of interest. The envelope is obtained by calculating the absolute value of the complex number, converting it into a real number.

| Function | Original module | Package |

|---|---|---|

farm11,farm21 |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

fft |

ForSyDe.Atom.Skel.FastVector.DSP |

forsyde-atom |

mkWeightCoefs |

AESA.Coefs |

aesa-atom |

nS, nFFT |

AESA.Params |

aesa-atom |

dfb :: Signal (Window (Beam (Range CpxData )))

-> Signal (Beam (Range (Window RealData)))

dfb = SY.comb11 (M.farm11 fDFB . C.transpose)

fDFB :: Window CpxData -> Window RealData

fDFB = V.farm11 envelope . fft nS . weight

where

weight = V.farm21 (*) (mkWeightCoefs nFFT)

envelope a = let (i, q) = (realPart a, imagPart a)

in sqrt (i * i + q * q)2.2.2.5 Constant False Alarm Ratio (CFAR)

The CFAR normalizes the data within the video cubes in order to maintain a constant false alarm rate with respect to a detection threshold. This is done in order to keep the number of false targets at an acceptable level by adapting the normalization to the clutter situation in the area (around a cell under test) of interest. The described process can be depicted as in Figure 11 which suggests the stencil data accessing pattern within the video cubes.

cfar :: Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

cfar = SY.comb11 (V.farm11 fCFAR)

The cfar process applies the function on every matrix corresponding to each beam. The function normalizes each Doppler window, after which the sensitivity will be adapted to the clutter situation in current area, as seen in Figure 13. The blue line indicates the mean value of maximum of the left and right reference bins, which means that for each Doppler sample, a swipe of neighbouring bins is necessary, as suggested by Figure 11. This is a typical pattern in signal processing called stencil, which will constitute the main parallel skeleton within the function.

fCFAR :: Range (Window RealData) -> Range (Window RealData)

fCFAR rbins = V.farm41 (\m -> V.farm31 (normCfa m)) md rbins lmv emv

where

md = V.farm11 (logBase 2 . V.reduce min) rbins

emv = (V.fanoutn (nFFT + 1) dummy) <++> (V.farm11 aritMean neighbors)

lmv = (V.drop 2 $ V.farm11 aritMean neighbors) <++> (V.fanout dummy)

-----------------------------------------------

normCfa m a l e = 2 ** (5 + logBase 2 a - maximum [l,e,m])

aritMean :: Vector (Vector RealData) -> Vector RealData

aritMean = V.farm11 (/n) . V.reduce addV . V.farm11 geomMean . V.group 4

geomMean = V.farm11 (logBase 2 . (/4)) . V.reduce addV

-----------------------------------------------

dummy = V.fanoutn nFFT (-maxFloat)

neighbors = V.stencil nFFT rbins

-----------------------------------------------

addV = V.farm21 (+)

n = fromIntegral nFFT| Function | Original module | Package |

|---|---|---|

farm[4/3/1]1, reduce, <++>, |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

drop, fanout, fanoutn, stencil |

||

comb11 |

ForSyDe.Atom.MoC.SY |

forsyde-atom |

maxFloat |

AESA.Coefs | aesa-atom |

nb, nFFT |

AESA.Params |

aesa-atom |

The function itself can be described with the system of eq. 3, where

is the minimum value over all Doppler channels in a batch for a specific data channel and range bin.

and calculate the early and respectively late mean values from the neighboring range bins as a combination of geometric and arithmetic mean values.

and are the earliest bin, respectively latest bin for which the CFAR can be calculated as and require at least bins + 1 guard bin before and respectively after the current bin. This phenomenon is also called the “stencil halo”, which means that CFAR, as defined in eq. 3 is applied only on bins.

bins earlier than , respectively later than , are ignored by the CFAR formula and therefore their respective EMV and LMV are replaced with the lowest representable value.

5 is added to the exponent of the CFAR equation to set the gain to 32 (i.e. with only noise in the incoming video the output values will be 32).

The first thing we calculate is the for each Doppler window (row). For each row of rbins (i.e. range bins of Doppler windows) we look for the minimum value (reduce min) and apply the binary logarithm on it.

Another action performed over the matrix rbins is to form two stencil “cubes” for EMV and LMV respectively, by gathering batches of Doppler windows like in eq. 4, computing them like in eq. 5.

Each one of these neighbors matrices will constitute the input data for calculating the and for each Doppler window. and are calculated by applying the mean function arithMean over them, as shown (only for the window associated with the bin) in eq. 5. The resulting emv and lmv matrices are padded with rows of the minimum representable value -maxFloat, so that they align properly with rbins in order to combine into the 2D farm/stencil defined at eq. 3. Finally, fCFAR yields a matrix of normalized Doppler windows. The resulting matrices are not transformed back into sample streams by the parent process, but rather they are passed as single tokens downstream to the INT stage, where they will be processed as such.

2.2.2.6 Integrator (INT)

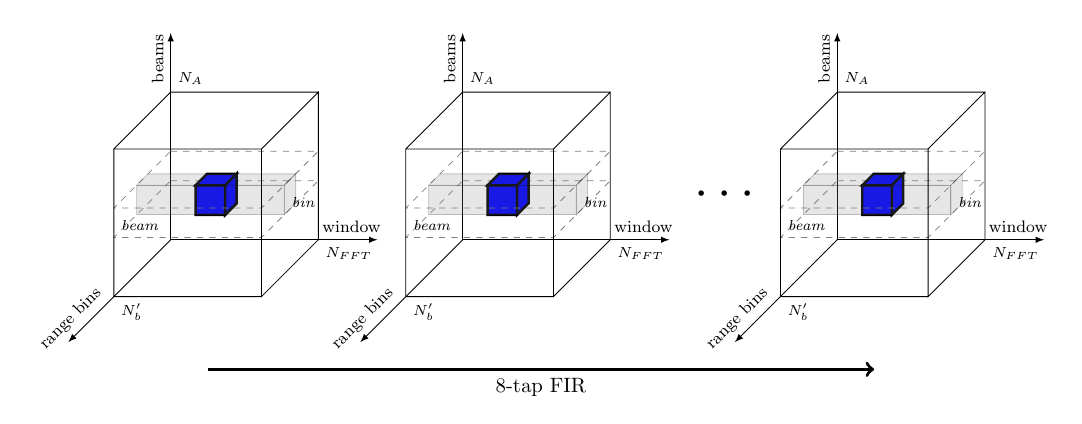

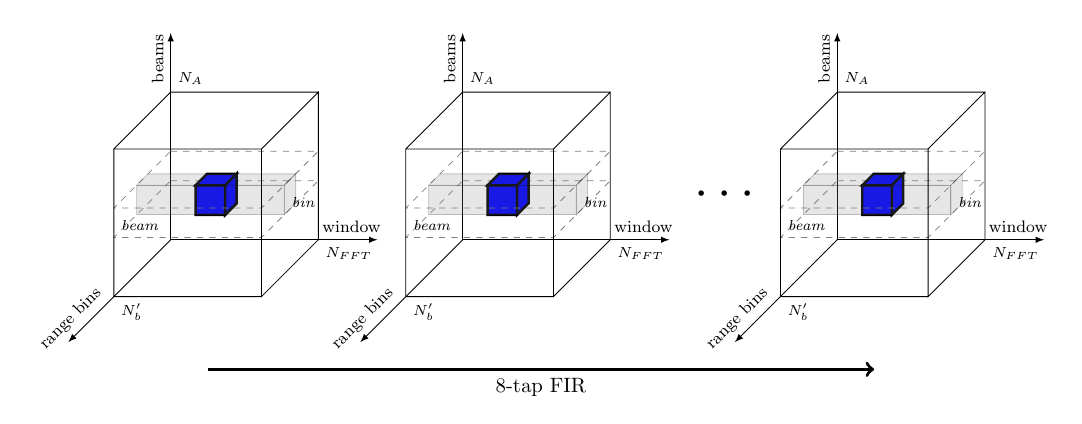

During the last stage of the video processing chain each data sample of the video cube is integrated against its 8 previous values using an 8-tap FIR filter, as suggested by the drawing in Figure 14.

int :: Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

int right left = firNet mkIntCoefs $ SY.interleave2 right leftBefore integrating though, the data from both the left and the right channel need to be merged and interleaved. This is done by the process interleave below, which is a convenient utility exported by the SY library, hiding a domain interface. When considering only the data structures, the interleave process can be regarded as an up-sampler with the rate 2/1. When taking into consideration the size of the entire data set (i.e. token rates structure sizes data size), we can easily see that the overall required system bandwidth (ratio) remains the same between the PC and INT stages, i.e. .

| Function | Original module | Package |

|---|---|---|

farm21,farm11,fanout |

ForSyDe.Atom.Skel.FastVector.Cube |

forsyde-atom |

fir' |

ForSyDe.Atom.Skel.FastVector.DSP |

forsyde-atom |

comb21,comb11, delay, interleave2 |

ForSyDe.Atom.MoC.SY |

forsyde-atom |

mkFirCoefs |

AESA.Coefs | aesa-atom |

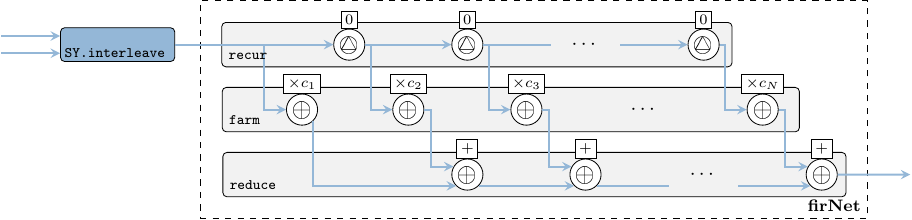

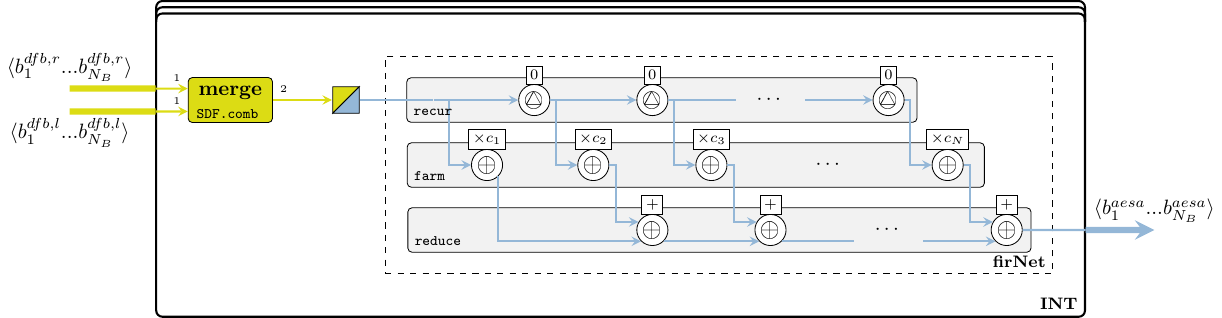

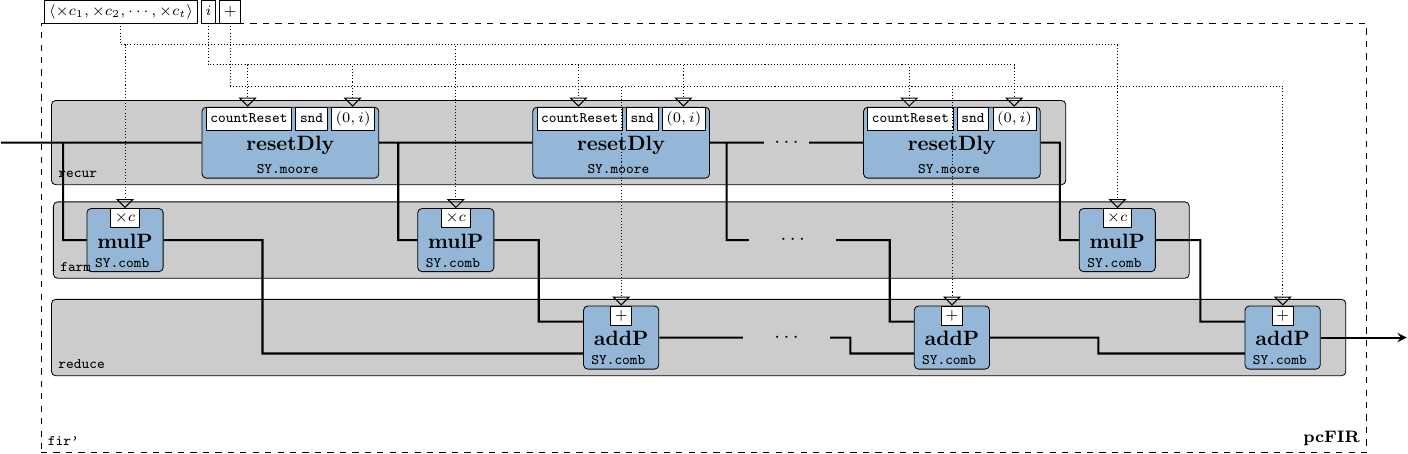

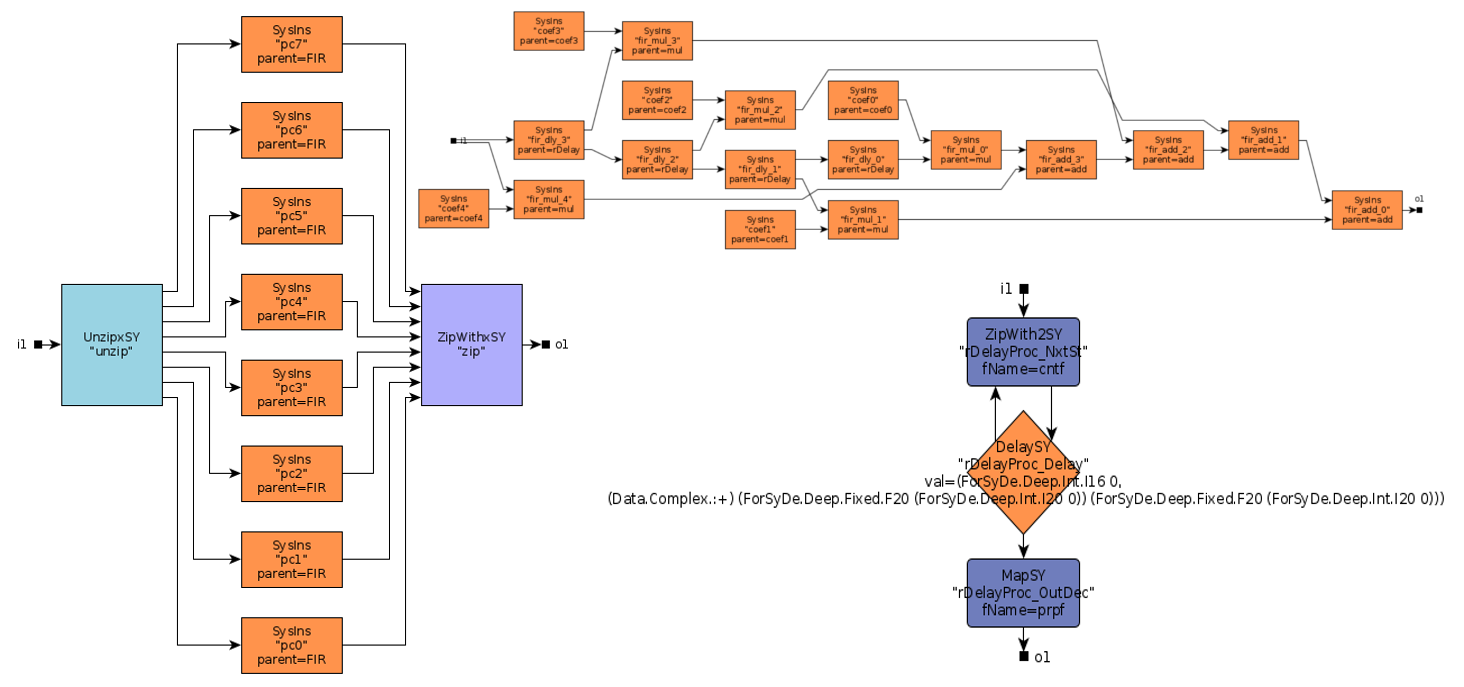

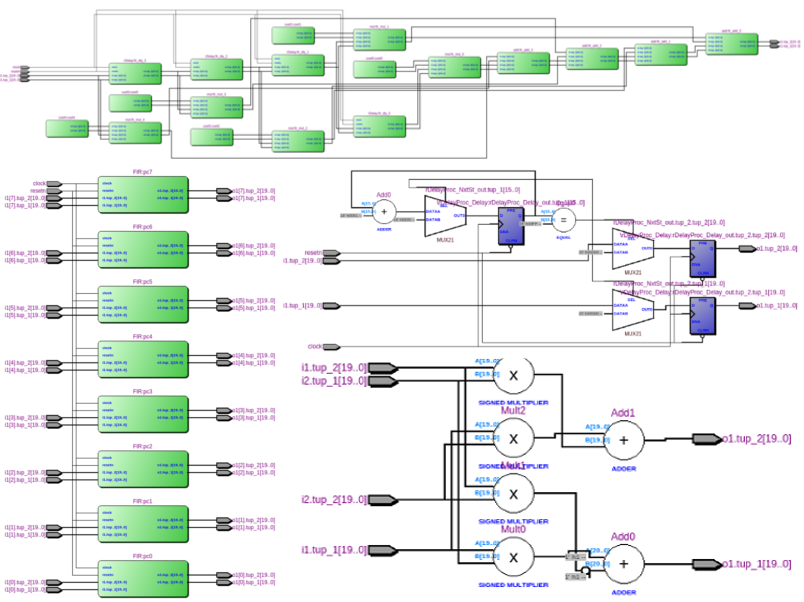

The 8-tap FIR filter used for integration is also a moving average, but as compared to the fir function used in section 2.2.2.2, the window slides in time domain, i.e. over streaming samples rather than over vector elements. To instantiate a FIR system we use the fir' skeleton provided by the ForSyDe-Atom DSP utility libraries, which constructs the the well-recognizable FIR pattern in Figure 15, i.e. a recur-farm-reduce composition. In order to do so, fir' needs to know what to fill this template with, thus we need to provide as arguments its “basic” operations, which in our case are processes operating on signals of matrices. In fact, fir itself is a specialization of the fir' skeleton, which defines its basic operations as corresponding functions on vectors. This feature derives from a powerful algebra of skeletons which grants them both modularity, and the possibility to transform them into semantically-equivalent forms, as we shall soon explore in section 7.

firNet :: Num a => Vector a -> SY.Signal (Cube a) -> SY.Signal (Cube a)

firNet coefs = fir' addSC mulSC dlySC coefs

where

addSC = SY.comb21 (C.farm21 (+))

mulSC c = SY.comb11 (C.farm11 (*c))

dlySC = SY.delay (C.fanout 0)2.2.3 System Process Network

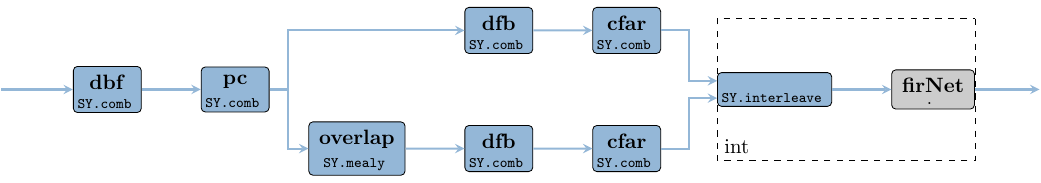

The AESA process network is formed by “plugging in” together all components instantiated in the previous sections, and thus obtaining the system description in Figure 16. We do not transpose the output data, because the Doppler windows are the ones we are interested in plotting as the innermost structures.

aesa :: Signal (Antenna (Window (Range CpxData )))

-> Signal (Beam (Range (Window RealData)))

aesa video = int rCfar lCfar

where

rCfar = cfar $ dfb oPc

lCfar = cfar $ dfb $ overlap oPc

oPc = pc $ dbf video2.2.4 System Parameters

Here we define the size constants, for a simple test scenario. The size nA can be inferred from the size of input data and the vector operations.

module AESA.Params where

nA = 16 :: Int

nB = 8 :: Int

nb = 1024 :: Int

nFFT = 256 :: Int

nS = 8 :: Int -- 2^nS = nFFT; used for convenience

pcTap = 5 :: Int -- number of FIR taps in the PC stage

freqRadar = 10e9 :: Float -- 10 Ghz X-band

waveLength = 3e8 / freqRadar

dElements = waveLength / 2

fSampling = 3e6 :: Float

pulseWidth = 1e-6 :: Float2.2.5 Coefficient Generators

Here we define the vectors of coefficients used throughout the AESA design. We keep this module as independent as possible from the main design and export the coefficients both as ForSyDe-Atom vectors but also as Haskell native lists, so that other packages can make use of them, without importing the whole ForSyDe-Atom chain.

import ForSyDe.Atom.Skel.FastVector as V

import ForSyDe.Atom.Skel.FastVector.Matrix as M

import ForSyDe.Atom.Skel.FastVector.DSP

import Data.ComplexThe mkBeamConst generator creates a matrix of beam constants used in the digital beamforming stage section 6.1.2.1. These beam constants perform both phase shift and tapering according to eq. 6, where performs tapering and perform phase shifting. For tapering we use a set of Taylor coefficients generated with our in-house utility taylor. The phase shift shall be calculated according to eq. 7, where is the distance between the antenna elements. is the angle between the wave front of the current beam and normal of the antenna elements and is the wavelength of the pulse.

mkBeamConsts :: RealFloat a

=> a -- ^ distance between radar elements

-> a -- ^ radar signal wavelength

-> Int -- ^ Number of antenna elements

-> Int -- ^ Number of resulting beams

-> Matrix (Complex a)

mkBeamConsts d lambda nA nB = M.farm21 mulScale taperingCf phaseShiftCf

where

-- all coefficients are normalized, i.e. scaled with 1/nA'

mulScale x y = x * y / nA'

-- tapering coefficients, c_k in Eq. (4)

taperingCf = V.farm11 (V.fanoutn nB) taylorCf

-- phase shift coefficients, e^(j*phi_kl) in Eqs.(4) and (5)

phaseShiftCf = V.farm11 (\k -> V.farm11 (mkCf k) thetas) antennaIxs

mkCf k theta_l = cis $ (k - 9.5) * 2 * pi * d * sin theta_l / lambda

--------------

-- Taylor series: nA real numbers; 4 nearly constant adjacent side lobes;

-- peak sidelobe level of -30dB

taylorCf = taylor nA 4 (-30)

-- theta_l spanning nB angles from 0 to pi

thetas = V.farm11 (\t -> pi/3 + t * (pi - 2*pi/3)/(nB'-1)) beamIxs

--------------

nA' = fromIntegral nA

nB' = fromIntegral nB

antennaIxs = vector $ map realToFrac [0..nA-1]

beamIxs = vector $ map realToFrac [0..nB-1]-- Can be used without importing the ForSyDe.Atom libraries.

mkBeamConsts' :: RealFloat a => a -> a-> Int -> Int -> [[Complex a]]

mkBeamConsts' d l nA nB = map fromVector $ fromVector $ mkBeamConsts d l nA nB| Function | Original module | Package |

|---|---|---|

farm11,farm21, fanout, (from-)vector |

ForSyDe.Atom.Skel.FastVector |

forsyde-atom |

taylor |

ForSyDe.Atom.Skel.FastVector.DSP |

forsyde-atom |

The mkPcCoefs generator for the FIR filter in section 6.1.2.2 is simply a -tap Hanning window. It can be changed according to the user requirements. All coefficients are scaled with so that the output does not overflow a possible fixed point representation.

mkPcCoefs :: Fractional a => Int -> Vector a

mkPcCoefs n = V.farm11 (\a -> realToFrac a / realToFrac n) $ hanning n| Function | Original module | Package |

|---|---|---|

hanning |

ForSyDe.Atom.Skel.FastVector.DSP |

forsyde-atom |

-- Can be used without importing the ForSyDe.Atom libraries.

mkPcCoefs' :: Fractional a => Int -> [a]

mkPcCoefs' n = fromVector $ mkPcCoefs nWe use also a Hanning window to generate the complex weight coefficients for decreasing the Doppler side lobes during DFB in section 2.2.2.4. This can be changed according to the user requirements.

mkWeightCoefs :: Fractional a => Int -> Vector a

mkWeightCoefs nFFT = V.farm11 realToFrac $ hanning nFFT-- Can be used without importing the ForSyDe.Atom libraries.

mkWeightCoefs' :: Fractional a => Int -> [a]

mkWeightCoefs' nFFT = fromVector $ mkWeightCoefs nFFTFor the integrator FIR in section 6.1.2.5 we use a normalized square window.

-- Can be used without importing the ForSyDe.Atom libraries.

mkIntCoefs' :: Fractional a => [a]

mkIntCoefs' = [1/8,1/8,1/8,1/8,1/8,1/8,1/8,1/8] -- The maximum floating point number representable in Haskell.

maxFloat :: Float

maxFloat = x / 256

where n = floatDigits x

b = floatRadix x

(_, u) = floatRange x

x = encodeFloat (b^n - 1) (u - n)2.3 Model Simulation Against Test Data

As a first trial to validate that our AESA high-level model is “sane”, i.e. is modeling the expected behavior, we test it against realistic input data from an array of antennas detecting some known objects. For this we have provided a set of data generator and plotter scripts, along with an executable binary created with the CubesAtom module presented in section 2.2. Please read the project’s README file on how to compile and run the necessary software tools.

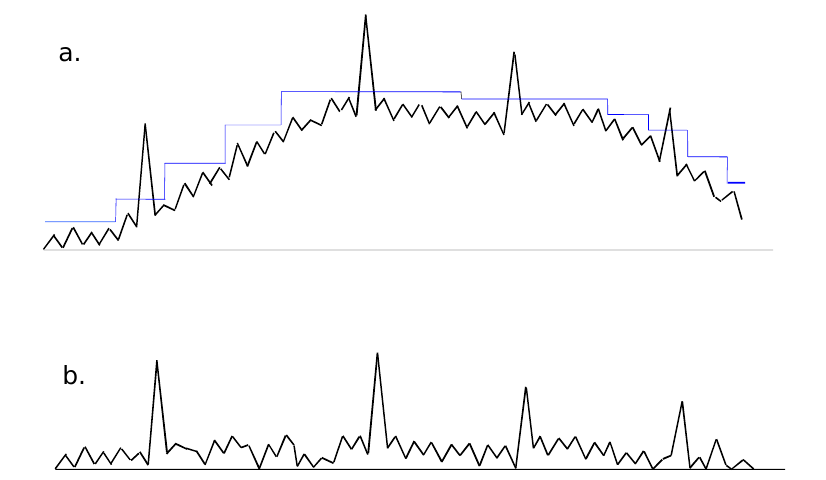

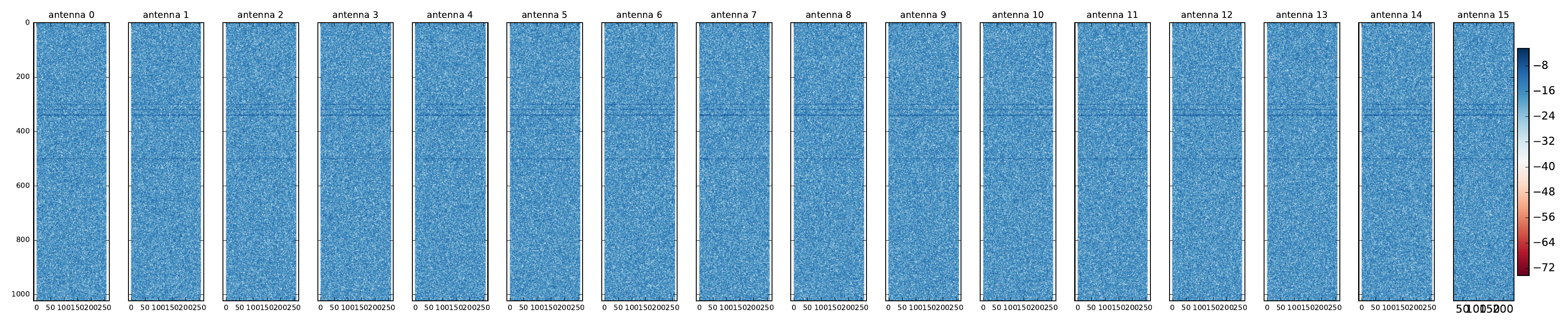

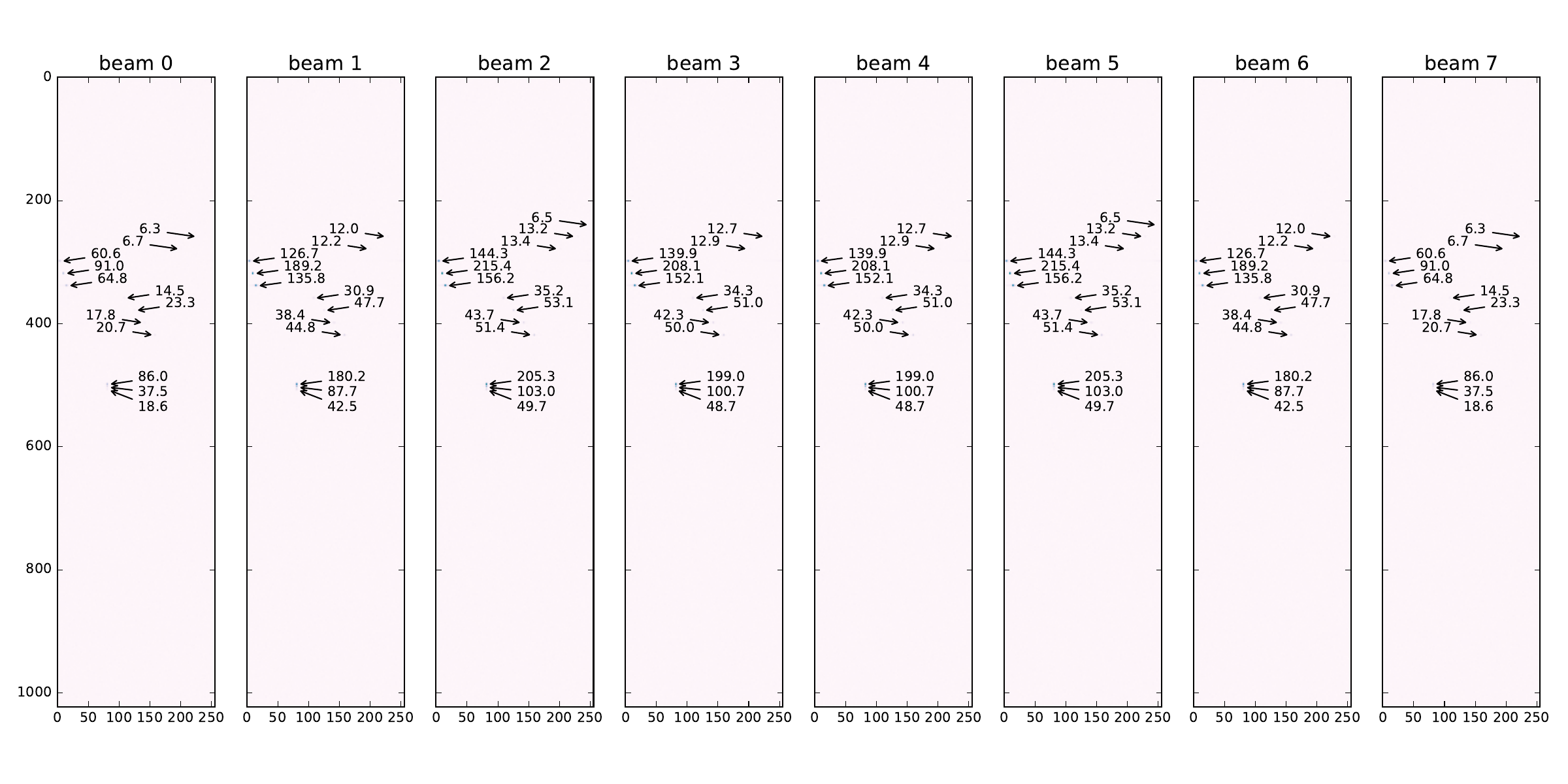

The input data generator script replicates a situation in which 13 distinct objects, either near each other or far apart, as seen in tbl. 2, are detected drowned into -18dB worth of noise by the 16 AESA antenna elements (see section 2.2.AESA parameters). In in Figure 17 (a) can be seen a plot with the absolute values of the complex samples in the first video indata cube, comprising of 16 antenna (pulse range) matrices.

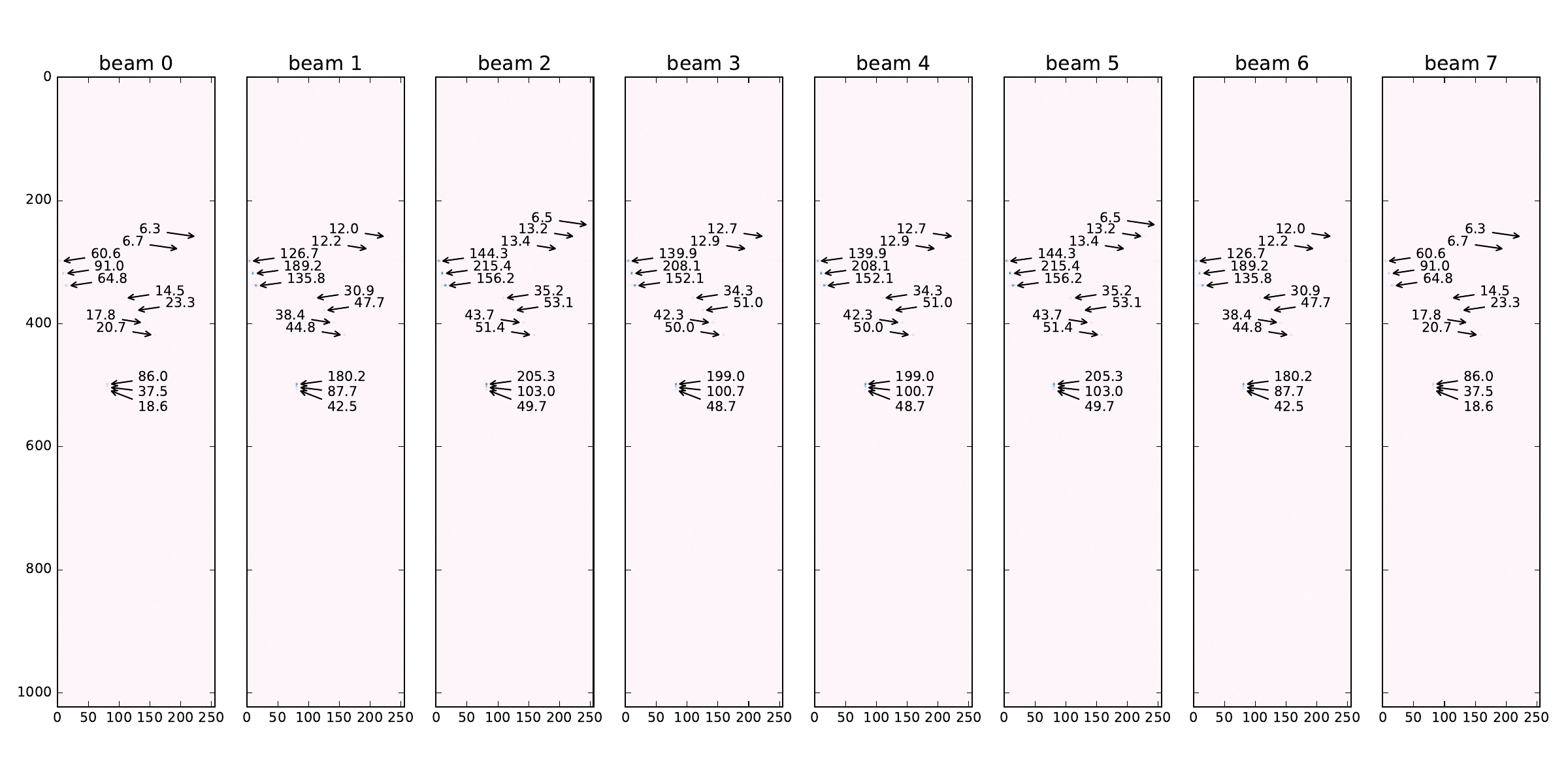

In Figure 17 (b) a cube consisting of 8 beam (Doppler range) matrices from the AESA signal processing output is shown. We can see the 13 objects detected with different intensities across the 8 beams. As they are all positioned at approximately the same angle relative to the antenna (i.e. ) we can see the maximum correlation values are reflected in beam 2.

| # | Distance (m) | Angle () | Rel. Speed (m/s) | Rel. Power |

|---|---|---|---|---|

| 1 | 12e3 | 0.94 | -6 | |

| 2 | 13e3 | 5 0.94 | -6 | |

| 3 | 14e3 | 10 0.94 | -6 | |

| 4 | 15e3 | -0.94 | -2 | |

| 5 | 16e3 | -2 0.94 | -2 | |

| 6 | 17e3 | -3 0.94 | -2 | |

| 7 | 18e3 | -20 0.94 | -4 | |

| 8 | 19e3 | -23 0.94 | -4 | |

| 9 | 20e3 | -26 0.94 | -4 | |

| 10 | 21e3 | -29 0.94 | -4 | |

| 11 | 25e3 | -15 0.94 | -2 | |

| 12 | 25.4e3 | -15 0.94 | -4 | |

| 13 | 25.2e3 | -15 0.94 | -3 |

Figure 17: AESA data plots. a — Absolute values for one input video cube with antenna data, b — One output cube with radar data

2.4 Conclusion

In this section we have shown how to write a fully-functional high-level model of an AESA radar signal processing chain in ForSyDe-Atom. In the process we have exemplified basic modeling concepts such as layers, process constructors and skeletons. For simplicity we have employed only two widely-used layers, representing time (through the MoC layer) and parallelism (through the Skeleton layer) aspects in a system. Within the MoC layer we used only the SY MoC capturing the passage of data (structures) in a synchronous pipeline fashion. Within the Skeleton layer we used algorithmic skeletons on vector data types to capture the inherent parallel interaction between elements, mainly to build algorithms on cubes. As of Figure 3 from section 2.1, this layer setup is represented by right picture (processes of skeleton functions). However in section 2.2.2.6 we have given “an appetizer” on how to instantiate regular, parameterizable process network structures using the same skeletons, thanks to the concept of layers.

In section 6 we transform this model to capture some more refined notions of time and data passage, as well as more complex use of skeletons and patterns, gradually reaching enough system details to start considering the synthesis of the model in section 7 to a hardware platform. Until then we will introduce an alternative modeling framework, as well as practical tools to verify the conformance of the ForSyDe model and all its subsequent refinements to the given specification.

3 Alternative Modeling Framework: ForSyDe-Shallow

This section follows step-by-step the same approach as section 2, but this time using the ForSyDe-Shallow modeling framework. The purpose is to familiarize the reader to the syntax of ForSyDe-Shallow should the designer prefer it instead of ForSyDe-Atom. This section is also meant to show that, except for minor syntactic differences, the same modeling concepts are holding and the user experience and API design are very similar.

| Package | aesa-shallow-0.1.0 | path: ./aesa-shallow/README.md |

| Deps | forsyde-shallow-0.2.2 | url:http://hackage.haskell.org/package/forsyde-shallow |

| forsyde-shallow-extensions-0.1.1 | path: ./forsyde-shallow-extensions/README.md |

|

| aesa-atom-0.1.1 | path: ./aesa-atom/README.md |

|

| Bin | aesa-shallow | usage: aesa-shallow --help |

ForSyDe-Shallow is the flagship and the oldest modeling language of the ForSyDe methodology (Sander and Jantsch 2004). It is a domain specific language (DSL) shallow-embedded into the functional programming language Haskell and uses the host’s type system, lazy evaluation mechanisms and the concept of higher-order functions to describe the formal modeling framework defined by ForSyDe. At the moment of writing this report, ForSyDe-Shallow is the more “mature” counterpart of ForSyDe-Atom. Although some practical modeling concepts such as layers, patterns and skeletons have been originally developed within ForSyDe-Atom, they are now well-supported by ForSyDe-Shallow as well. In the future the modeling frameworks such as ForSyDe-Atom and ForSyDe-Shallow are planned to be merged into a single one incorporating the main language features of each one and having a similar user experience as ForSyDe-Shallow.

3.1 The High-Level Model

The behavioral model of this section is exactly the same as the one presented in in section 2.2, and thus we will not go through all the details of each functional block, but rather list the code and point out the syntax differences.

The code for this section is written in the following module, see section 1.2 on how to use it:

The code for this section is written in the following module, see section 1.2 on how to use it:

3.1.1 Imported Libraries

The first main difference between ForSyDe-Shallow and ForSyDe-Atom becomes apparent when importing the libraries: ForSyDe-Shallow does not require to import as many sub-modules as its younger counterpart. This is because the main library ForSyDe.Shallow exports all the main language constructs, except for specialized utility blocks, so the user does not need to know where each function is placed.

For the AESA model we make use of such utilities, such as -dimensional vectors (i.e. matrices, cubes) and DSP blocks, thus we import our local extended ForSyDe.Shallow.Utilities library.

-- | explicit import from extensions package. Will be imported

-- normally once the extensions are merged into forsyde-shallow

import "forsyde-shallow-extensions" ForSyDe.Shallow.Core.Vector

import "forsyde-shallow-extensions" ForSyDe.Shallow.UtilityFinally, we import Haskell’s Complex type, to represent complex numbers.

To keep the model consistent with the design in section 2.2 we import the same parameters and coefficient generator functions from the aesa-atom package, as presented in sections 2.2.AESA parameters, 2.2.Coefficients.

import AESA.Params

import AESA.CoefsShallow -- wraps the list functions exported by 'AESA.Coefs' into

-- 'ForSyDe.Shallow' data types.3.1.2 Type Synonyms

We use the same (local) aliases for types representing the different data structure and dimensions.

type Antenna = Vector -- length: nA

type Beam = Vector -- length: nB

type Range = Vector -- length: nb

type Window = Vector -- length: nFFT

type CpxData = Complex Float

type RealData = Float3.1.3 Video Processing Pipeline Stages

This section follows the same model as section 2.2.2 using the ForSyDe-Shallow modeling libraries. The digital beamforming (DBF) block presented in section 2.2.2.1 becomes:

dbf :: Signal (Antenna (Window (Range CpxData)))

-> Signal (Window (Range (Beam CpxData)))

dbf = combSY (mapMat fDBF . transposeCube)

fDBF :: Antenna CpxData -- ^ input antenna elements

-> Beam CpxData -- ^ output beams

fDBF antennas = beams

where

beams = reduceV (zipWithV (+)) beamMatrix

beamMatrix = zipWithMat (*) elMatrix beamConsts

elMatrix = mapV (copyV nB) antennas

beamConsts = mkBeamConsts dElements waveLength nA nBThe second main difference between the syntax of ForSyDe-Shallow and ForSyDe-Atom can be noticed when using the library functions, such as process constructors: function names are not invoked with their module name (alias), but rather with a suffix denoting the type they operate on, e.g. transposeCube is the equivalent of C.transpose. Another difference is that constructors with different numbers of inputs and outputs are not differentiated by a two-number suffix, but rather by canonical name, e.g. combSY is the equivalent of SY.comb11. Lastly, you might notice that some function names are completely different. That is because ForSyDe-Shallow uses names inspired from functional programming, mainly associated with operations on lists, whereas ForSyDe-Atom tries to adopt more suggestive names with respect to the layer of each design component and its associated jargon, e.g. mapMat is the equivalent of M.farm11, or zipWithV is equivalent with V.farm21.

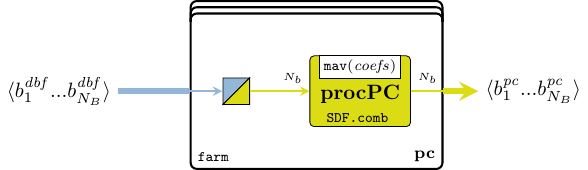

We go further with the description of the pulse compression (PC) block, as described previously in section 2.2.2.1 becomes. The same variation in naming convention can be noticed, but the syntax is still the same.

pc :: Signal (Window (Range (Beam CpxData)))

-> Signal (Window (Beam (Range CpxData)))

pc = combSY (mapV (mapV fPC . transposeMat))

fPC :: Range CpxData -- ^ input range bin

-> Range CpxData -- ^ output pulse-compressed bin

fPC = mav (mkPcCoefs 5)The same goes for overlap state machine use in the corner turn (CT) stage, as well as the Doppler filter bank (DFB) and the constant false alarm ratio (CFAR) stages from sections 2.2.2.3-2.2.2.5 respectively.

overlap :: Signal (Window (Beam (Range CpxData)))

-> Signal (Window (Beam (Range CpxData)))

overlap = mealySY nextState outDecode initState

where

nextState _ cube = dropV (nFFT `div` 2) cube

outDecode s cube = s <+> takeV (nFFT `div` 2) cube

initState = copyCube nb nB (nFFT `div` 2) 0 dfb :: Signal (Window (Beam (Range CpxData )))

-> Signal (Beam (Range (Window RealData)))

dfb = combSY (mapMat fDFB . transposeCube)

fDFB :: Window CpxData -> Window RealData

fDFB = mapV envelope . onComplexFloat (fft nFFT) . weight

where

weight = zipWithV (*) (mkWeightCoefs nFFT)

envelope a = let (i, q) = (realPart a, imagPart a)

in realToFrac $ sqrt (i * i + q * q)

onComplexFloat f = mapV (fmap realToFrac) . f . mapV (fmap realToFrac)The fft function from ForSyDe-Shallow is provided as a monomorphic utility function, and not necessarily as a skeleton. This is why, although slightly more efficient, it is not as flexible as the skeleton counterpart, and thus we need to wrap it inside our custom data type converter onComplexFloat to be able to “plug it” into our system, i.e. there are no data type mismatches.

cfar :: Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

cfar = combSY (mapV fCFAR)

fCFAR :: Range (Window RealData) -> Range (Window RealData)

fCFAR rbins = zipWith4V (\m -> zipWith3V (normCfa m)) md rbins lmv emv

where

md = mapV (logBase 2 . reduceV min) rbins

emv = (copyV (nFFT + 1) dummy) <+> (mapV aritMean neighbors)

lmv = (dropV 2 $ mapV aritMean neighbors) <+> (copyV (nFFT*2) dummy)

-----------------------------------------------

normCfa m a l e = 2 ** (5 + logBase 2 a - maximum [l,e,m])

aritMean = mapV (/n) . reduceV addV . mapV geomMean . groupV 4

geomMean = mapV (logBase 2 . (/4)) . reduceV addV

-----------------------------------------------

dummy = copyV nFFT (-maxFloat)

neighbors = stencilV nFFT rbins

-----------------------------------------------

addV = zipWithV (+)

n = fromIntegral nFFTFor the integration stage (INT) presented in section 2.2.2.3 we extended the ForSyDe.Shallow.Utility library with a fir' skeleton and an interleaveSY process, similar to the ones developed for ForSyDe-Atom3. Thus we can implement this stage exactly as before:

int :: Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

-> Signal (Beam (Range (Window RealData)))

-- int r l = firNet $ interleaveSY r l

-- where

-- firNet = zipCubeSY . mapCube (firSY mkIntCoefs) . unzipCubeSY

int r l = fir' addSC mulSC dlySC mkIntCoefs $ interleaveSY r l

where

addSC = comb2SY (zipWithCube (+))

mulSC c = combSY (mapCube (*c))

dlySC = delaySY (repeatCube 0)| Function | Original module | Package |

|---|---|---|

mapV, zipWith, zipWith[4/3], |

ForSyDe.Shallow.Core.Vector |

forsyde-shallow |

reduceV, lengthV, dropV, takeV, |

||

copyV, (<+>), stencilV |

||

mapMat, zipWithMat, transposeMat |

ForSyDe.Shallow.Utility.Matrix |

forsyde-shallow |

transposeCube |

ForSyDe.Shallow.Utility.Cube |

forsyde-shallow-extensions |

fir |

ForSyDe.Shallow.Utility.FIR |

forsyde-shallow |

fft |

ForSyDe.Shallow.Utility.DFT |

forsyde-shallow |

mav |

ForSyDe.Shallow.Utility.DSP |

forsyde-shallow-extensions |

combSY, comb2SY, mealySY, delaySY |

ForSyDe.Shallow.MoC.Synchronous |

forsyde-shallow |

interleaveSY |

ForSyDe.Shallow.Utility |

forsyde-shallow-extensions |

mkBeamConsts,mkPcCoefs, |

AESA.Coefs |

aesa-atom |

mkWeightCoefs, mkFirCoefs, maxFloat |

||

dElements, waveLength, nA, nB |

AESA.Params |

aesa-atom |

nFFT, nb |

3.1.4 The AESA Process Network

The process network is exactly the same as the one in section 2.2, but instantiating the locally defined components.

aesa :: Signal (Antenna (Window (Range CpxData )))

-> Signal (Beam (Range (Window RealData)))

aesa video = int rCfar lCfar

where

rCfar = cfar $ dfb oPc

lCfar = cfar $ dfb $ overlap oPc

oPc = pc $ dbf video3.2 Model Simulation Against Test Data

Similarly to the ForSyDe-Atom implementation, we have provided a runner to compile the model defined in section 2.2 within the AESA.CubesShallow module into an executable binary, in order to tests that it is sane. The same generator an plotter scripts can be used with this binary, so please read the project’s README file on how to compile and run the necessary software tools.

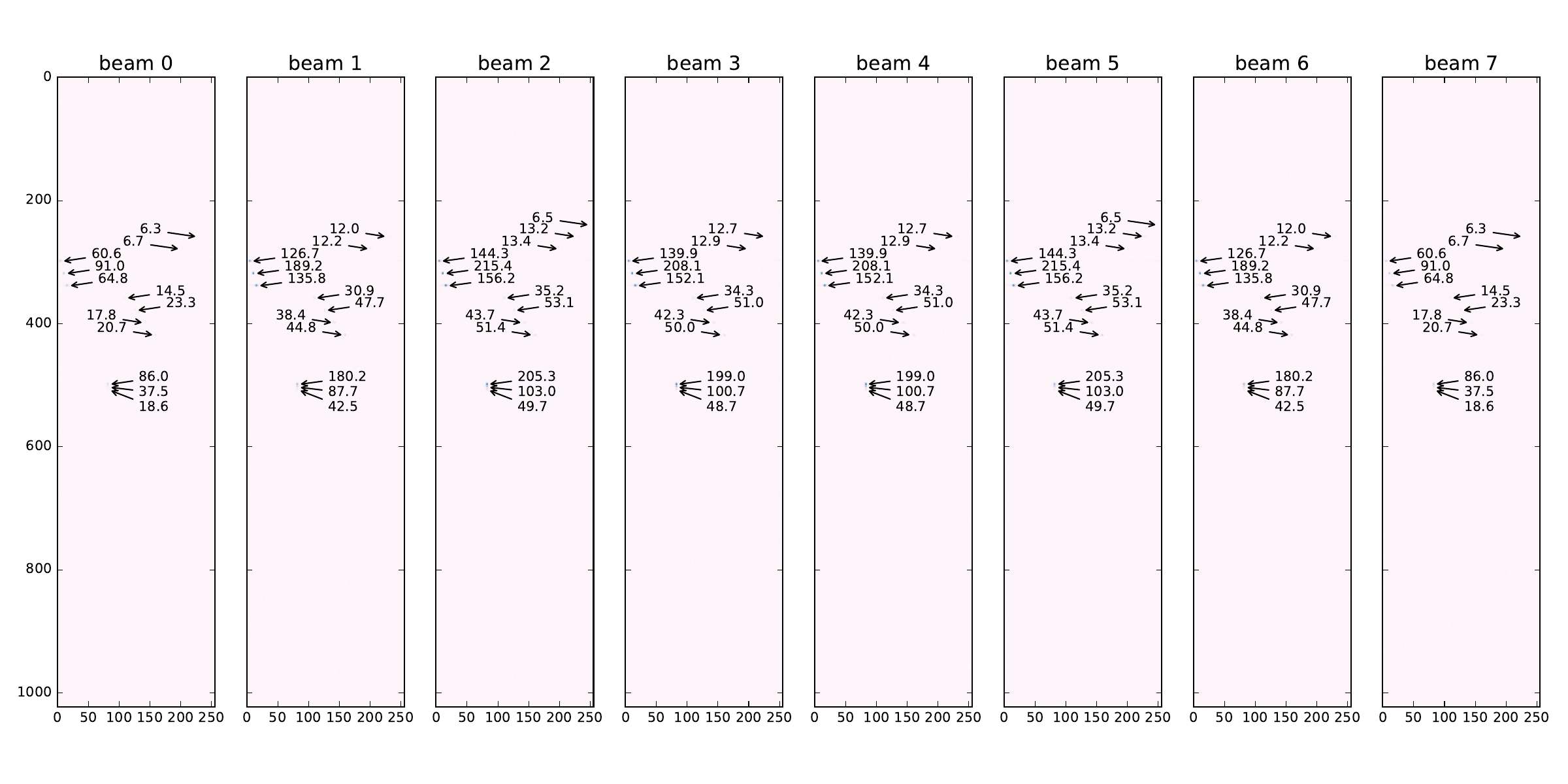

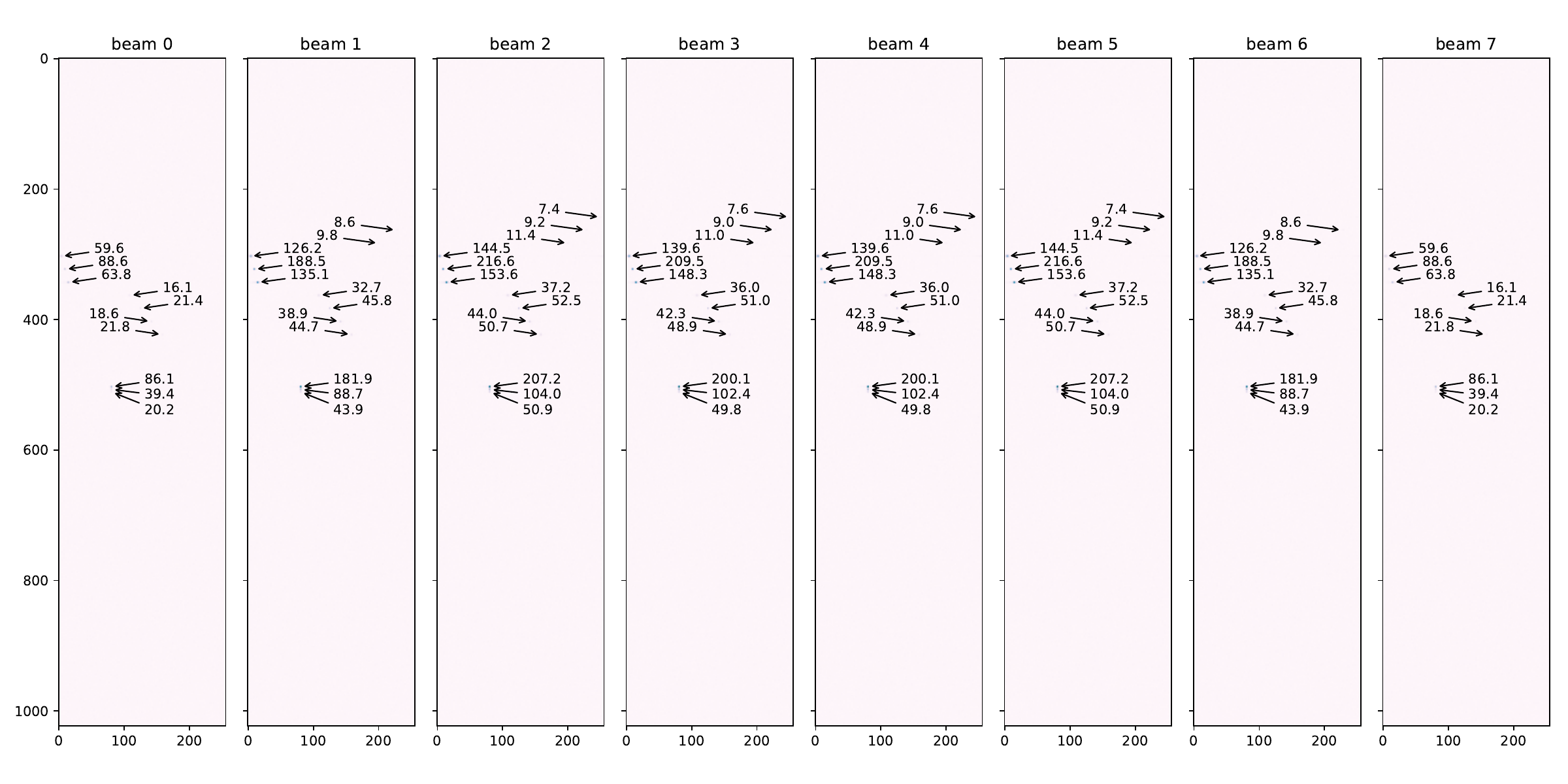

We use the same generated input data reflecting the 13 objects from tbl. 2, plotted in Figure 17 (a). This time the AESA radar processing output shows the picture in Figure 18.

Comparing Figure 18 with Figure 17 (b) one can see that the same objects have been identified, albeit with slightly different correlation values. This is because we use single-precision floating point Float as the base number representation in our model, which are well-known to be very sensitive to slight variations in arithmetic operations, as far as the order of evaluation is concerned. The output data graphs reflect the fact that ForSyDe-Shallow and ForSyDe-Atom have different implementation, but that is the only conclusion that can be drawn from this.

3.3 Conclusion

In this section we have presented an alternative implementation of the AESA signal processing high-level model in the ForSyDe-Shallow modeling framework. We have deliberately implemented the same model in order to highlight the API differences between ForSyDe-Atom and ForSyDe-Shallow.

The next sections will only use ForSyDe-Atom as the main modeling framework, however users should hopefully have no trouble switching between their modeling library of choice. The insights in the API of ForSyDe-Shallow will also be useful in section 7 when we introduce ForSyDe-Deep, since the latter has similar constructs.

4 Modeling the Radar Environment

In this section we model the input signals describing the radar environment, containing analog information about reflected objects, respectivey the digital acquisition of those signals. The purpose of this section is to introduce new modeling “tools” in ForSyDe-Atom: the continuous time (CT) MoC for describing signals that evolve continuously in time, and a new layer, the Probability layer, for describing random values which are distributed with a certain probability. This section can be read independently from the rest.

| Package | aesa-atom-0.3.1 | path: ./aesa-atom/README.md |

| Deps | forsyde-atom-0.2.2 | url: https://forsyde.github.io/forsyde-atom/api/ |

| Bin | aesa-atom | usage: aesa-atom -g[NUM] -d (see --help) |

The Probability layer and the CT MoC are highlights of the functional implementation of ForSyDe-Atom. Both are represented by paradigms which describe dynamics over continuous domains. However computers, being inherently discrete machines, cannot represent continuums, hence any computer-aided modeling attempt is heavily dependent on numerical methods and discrete “interpretations” of continuous domains (e.g. sampled data). In doing so, we are not only forcing to alter the problem, which might by analytical in nature to a numerical one, but might also unknowingly propagate undesired and chaotic behavior given rise by the fundamental incompatibilities of these two representations. The interested reader is strongly advised to read recent work on cyber-physical systems such as (Edward A Lee 2016; Bourke and Pouzet 2013) to understand these phenomenons.

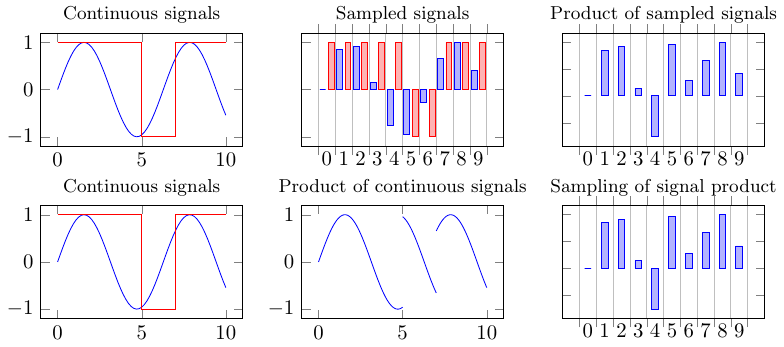

Being computer programs ForSyDe-Atom models are still dependent on numerical representations, however ForSyDe-Atom deals with this by simply abstracting away the continuous domain (e.g. time) as a function argument. Therefore during modeling, any trasformation can be described as a composition of pure functions. The numerical representation of the domain becomes apparent only at the end, when a model is being evaluated, e.g. when a signal is being plotted, in which case the pure function resulted from a chain of transformations is being evaluated for a certain experiment. This principle is depicted in Figure 19, and is enforced by the host language’s (i.e.e Haskell’s) lazy evaluation mechanism.

In ForSyDe-Atom, CT is defined in the MoC layer, and is describing (discrete) timed interactions between continuous sub-signals. Implementation details and example usage are found at (Ungureanu, Medeiros, and Sander 2018). In CT signals each event is described by a discrete tag and a function of time: the tags describe a simple algebra of discrete interactions, whereas event interactions themselves are simply function compositions.

In probability theory on the other hand, an observed value is described by a certain distribution (e.g. normal, uniform), which is a function of a random experiment. Similarly to the CT MoC, the Probability layer represents values by their “recipe” to achieve a desired distribution, whereas the algebra defined by this layer is describing the composition of such recipes. When we need to evaluate a system though, e.g. to plot/trace the behavior, we need to feed the transformed recipe with a (pseudo)-random seed in order to obtain an experimental value.

The radar environment model is found in the AESA.Radar module in aesa-atom package. This module is by default bypassed by the AESA signal processing executable, but if invoked manually, it recreates the AESA radar input for the same 13 object reflections seen in Figure 17 (a), and feeds this input to the AESA signal processing chain. The functions generating this data are presented as follows.

For this model we import the following MoC libraries: CT defines discrete interactions between continuous sub-domains, DE defines the discrete event MoC, which in this case is only used as a conduit MoC, and SY which defines the synchronous reactive MoC, on which the AESA signal processing chain operates. From the Skeleton layer we import Vector. From the Probability layer we import the Normal distribution to represent white noise.

import qualified ForSyDe.Atom.MoC.Time as T

import ForSyDe.Atom.MoC.CT as CT

import ForSyDe.Atom.MoC.DE as DE

import ForSyDe.Atom.MoC.SY as SY

import ForSyDe.Atom.Skel.FastVector as V

import ForSyDe.Atom.Prob.Normal as NOther necessary utilities are also imported.

Since we model high frequency signals, we want to avoid unnecessary quantization errors when representing time instants, therefore we choose a more appropriate representation for timestamps, as Rational numbers. We thus define two aliases CTSignal and DESignal to represent CT respectively DE signals with Rational timestamps.

type CTSignal a = CT.SignalBase Time Time a

type DESignal a = DE.SignalBase Time a4.1 Object Reflection Model

The beamforming principle, illustrated in Figure 20 and briefly presented in section 2.2.2.1 allows to extract both distance and speed information by cross-checking the information carried by a reflection signal as seen by multiple antenna elements.

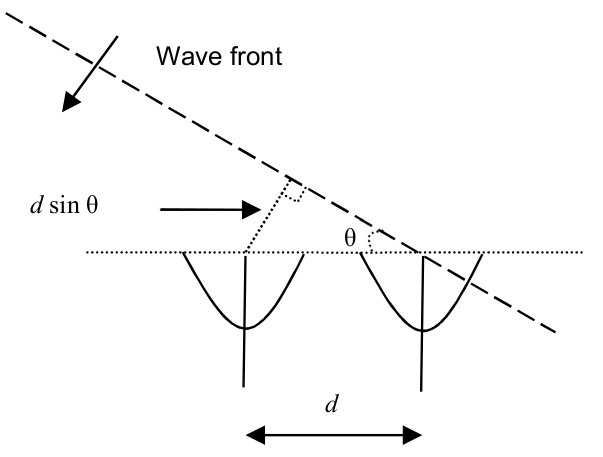

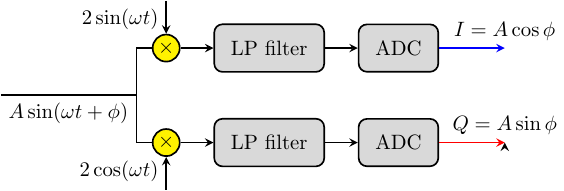

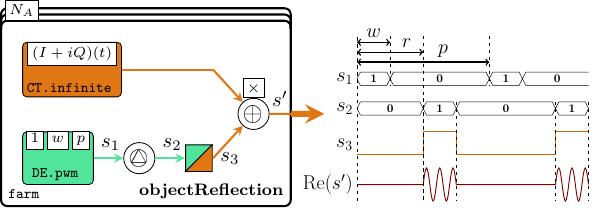

According to basic radar principles, an object is detected by sending carrier pulse wave (usually in GHz band, pulse width , period ), and decoding the cumulative information from the returning signal, such as reflection time or phase modulation . In an AESA radar, the constructive interference created by multiple simultaneous detections (see Figure 20) infers information about direction or speed. Each antenna element is extracting phase information as complex numbers where and , which are then samples them and stores them into range bins, like in Figure 22.

We model the radar environment by recreating, in CT domain, the image of signals reflected by arbitrary objects, as perceived by every antenna element. Since many of the calculations are time-dependent, we need a high precision for the number representation. Therefore, as with the time representation, the coefficients representing the radar physics need to be defined as rationals (and not just converted), hence we define ' alternatives to the coefficients in AESA.Params.

freqRadar' = 10e9 :: Rational

waveLength' = 3e8 / freqRadar'

dElements' = waveLength' / 2

fSampling' = 3e6 :: Rational

pulseWidth' = 1e-6 :: Rational

sampPeriod' = 1 / fSampling' :: Rational

pulsePeriod' = sampPeriod' * realToFrac nbWe approach modeling of the object reflection signals from two perspectives: the first one is a simple “translation” of a numerical program, e.g. written in Matlab or Python, where we only abstract away the time representation as a function argument; the second one is a more proper description of the signal transformations and interactions through CT processes.

4.1.1 Approach 1: Translating a Numerical Program

In this approach we simply translate the Python script used to generate the AESA radar indata for the previous sections, included in this project source files. We do this in order to familiarize with the concept of continuum in ForSyDe as simply functions over (an abstract representation of) time. This way any numerical program can become a CT signal by defining it as a function which exposes the time variable as an argument and passes it to an infinite signal generator.

The following function describes the value in time of the impulses reflected from a specific object with certain characteristics (see arguments list).

reflectionFunc :: Float -- ^ initial phase, random between [0,2pi)

-> Float -- ^ object distance from radar, in meters

-> Float -- ^ $\theta$, angle relative to the radar element

-> Float -- ^ relative speed in m/s. Positive speed means approaching object

-> Integer -- ^ signal power

-> Int -- ^ index of the antenna element in [0..nA]

-> T.Time -- ^ Abstract time representation. Evaluated only when plotting

-> Complex Float -- ^ Value of reflection signal for an antenna element (t)

reflectionFunc phi distance angle relativeSpeed signalPower chanIx t

| range_bin >= trefl_start && range_bin <= trefl_stop && not crossing_reflection = value

| not (range_bin >= trefl_start && range_bin <= trefl_stop) && crossing_reflection = value

| otherwise = 0

where

i' = realToFrac chanIx

t' = realToFrac t

-- wd is 2*pi*doppler frequency

wd = 2 * pi * relativeSpeed / waveLength

-- A is the power of the reflected signal (-5 => 1/32 of fullscale)

bigA = 2 ^^ signalPower

-- Large distances will fold to lower ones, assume infinite sequences

-- Otherwise the the first X pulses would be absent

trefl_start = ceiling ((2 * distance / 3e8) * fSampling) `mod` nb

trefl_stop = ceiling ((2 * distance / 3e8 + pulseWidth) * fSampling) `mod` nb

range_bin = ceiling (t' * fSampling) `mod` nb

-- Handling for distances at the edge of the

crossing_reflection = trefl_stop < trefl_start

-- Models the delay between the first antenna element and the current one

channelDelay = (-1) * i' * pi * sin angle

bigI = bigA * cos (wd * t' + phi)

bigQ = (-1) * bigA * sin (wd * t' + phi)

value = (bigI :+ bigQ) * (cos channelDelay :+ sin channelDelay)The reflectionFunc function is then passed to a CT.infinite1 process constructor which generates an infinite signal. The object reflection on all antennas in a AESA system is constructed with a V.farm11 skeleton, which instantiates each signal generator characteristic function according to its antenna index.

objectReflection :: Float -> Float -> Float -> Float -> Integer

-> Vector (CTSignal (Complex Float))

objectReflection radix distance angle relativeSpeed power

= V.farm11 channelRefl (vector [0..nA-1])

where phi_start = 2 * pi * radix / 360

channelRefl i = CT.infinite1

(reflectionFunc phi_start distance angle relativeSpeed power i)4.1.2 Approach 2: CT Signal Generators

The second approach combines two CT signals to create the reflection model: a pulse width modulation (PWM) signal modeling the radar pulses, and an envelope signal containing the object’s phase information as a function of time, like in Figure 22. The envelope function is shown below. Observe that it only describes angle and relative speed, but not distance, since the distance is a function of the reflection time.

reflectionEnvelope :: Float -- ^ initial phase, random between [0,2pi)

-> Float -- ^ $\theta$, angle relative to the radar element

-> Float -- ^ relative speed in m/s. Positive speed means approaching object

-> Integer -- ^ signal power

-> Int -- ^ index of the antenna element in [0..nA]

-> T.Time -- ^ Abstract time representation. Evaluated only when plotting

-> Complex Float -- ^ envelope for one antenna element

reflectionEnvelope phi angle relativeSpeed power chanIdx t

= (bigI :+ bigQ) * (cos channelDelay :+ sin channelDelay)

where

-- convert integer to floating point

i' = realToFrac chanIdx

-- convert "real" numbers to floating point (part of the spec)

t' = realToFrac t

-- wd is 2*pi*doppler frequency

wd = 2 * pi * relativeSpeed / waveLength

-- A is the power of the reflected signal (-5 => 1/32 of fullscale)

bigA = 2 ^^ power

channelDelay = (-1) * i' * pi * sin angle

bigI = bigA * cos (wd * t' + phi)

bigQ = (-1) * bigA * sin (wd * t' + phi)

The reflection signal in a channel is modeled like in Figure 23 as the product between a PWM signal and an envelope generator. The pwm generator is a specialized instance of a DE.state11 process, and the reflection time is controlled with a DE.delay process. DE.hold1 is a MoC interface which transforms a DE signal into a CT signal of constant sub-signals (see in Figure 23).

channelReflection' :: Float -> Float -> Float -> Float -> Integer

-> Int -> CTSignal (Complex Float)

channelReflection' phi distance angle relativeSpeed power chanIndex

-- delay the modulated pulse reflections according to the object distance.

-- until the first reflection is observed, the signal is constant 0

= CT.comb21 (*) pulseSig (modulationSig chanIndex)

where

-- convert floating point numbers to timestamp format

distance' = realToFrac distance

-- reflection time, given as timestamp

reflTime = 2 * distance' / 3e8

-- a discrete (infinite) PWM signal with amplitude 1, converted to CT domain

pulseSig = DE.hold1 $ DE.delay reflTime 0 $ DE.pwm pulseWidth' pulsePeriod'

-- an infinite CT signal describing the modulation for each channel

modulationSig = CT.infinite1 . reflectionEnvelope phi angle relativeSpeed powerFinally, we describe an object reflection in all channels similarly to the previous approach, namely as a farm of channelReflection processes.

objectReflection' :: Float -> Float -> Float -> Float -> Integer

-> Vector (CTSignal (Complex Float))

objectReflection' radix distance angle relativeSpeed power

= V.farm11 channelRefl (vector [0..nA-1])

where phi_start = 2 * pi * radix / 360

channelRefl =

channelReflection' phi_start distance angle relativeSpeed powerBoth approaches 1 and 2 are equivalent. The reader is free to test this statement using whatever method she chooses, but for the sake of readability we will only use approach 2 from now on, i.e. objectReflection'.

4.2 Sampling Noisy Data. Using Distributions.

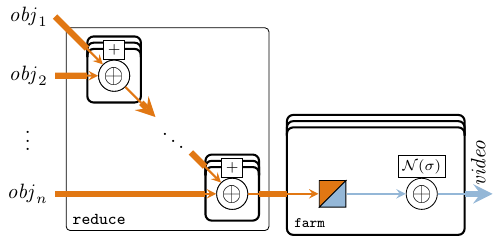

Multiple reflected objects are described as a vector of vectors of complex CT signals, each originating from its corresponding objectReflection' farm. To obtain the composite signal from multiple object reflections we sum-reduce all object reflection models, like in Figure 24.

reflectionMix :: Vector (Vector (CTSignal (Complex Float)))

-> Vector (CTSignal (Complex Float))

reflectionMix = (V.reduce . V.farm21 . CT.comb21) (+)

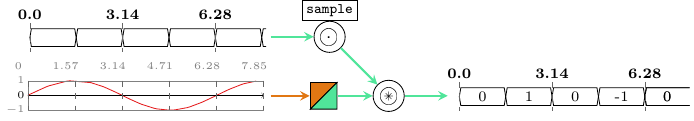

The next step is to sample the CT signals using an analog/digital converter (ADC) model. An ADC is characterized by:

a sampling rate, whose principle is illustrated in Figure 25. In our case we describe a CT/SY MoC interface as a serialization of CT/DE and DE/SY interfaces.

an additive white noise for each channel. White noise is a side-effect of the physical environment, and any sampled value is randomly-dependent on a particular experiment/observation. To describe random distributions in a pure setting, we wrap the object reflection SY samples into a

N.normalrecipe for generating normally-distributed observations with a standard deviation dependent on the power of the noise.

The N.normal wrapper is a function describing a Gaussian distribution, in our case the Box-Muller method to transform uniform distributions, and is dependent on a (pseudo-)random number generator, in our case StdGen. A StdGen is acquired outside the model as an IO action and is only passed to the model as an argument. By all means, these recipes (pure functions of “random seeds”) can be propagated and transformed throughout the AESA model, exactly in the same way as CT signals (pure functions of “time”), using atoms and patterns from to the Probability layer. However, for the scope of this model we sample these distributions immediately, because the AESA system is expecting numbers as video indata, not distributions. Hence our ADC model looks as follows:

adc :: Integer -- ^ noise power

-> DESignal () -- ^ global ADC sampler signal. Defines sampling rate

-> SY.Signal StdGen -- ^ signal of random generators used as "seeds"

-> CTSignal (Complex Float) -- ^ pure CT signal

-> SY.Signal (Complex Float) -- ^ noisy, sampled/observed SY signal

adc noisePow sampler seeds = probeSignal . addNoise . sampleAndHold

where

sampleAndHold = snd . DE.toSY1 . CT.sampDE1 sampler

addNoise = SY.comb11 (N.normal (2^^noisePow))